Trending in Mathematics

Adaptive Delayed-Update Cyclic Algorithm for Variational Inequalities

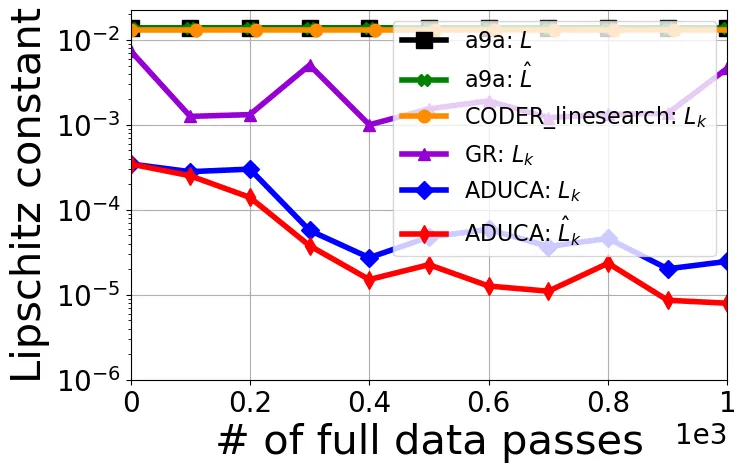

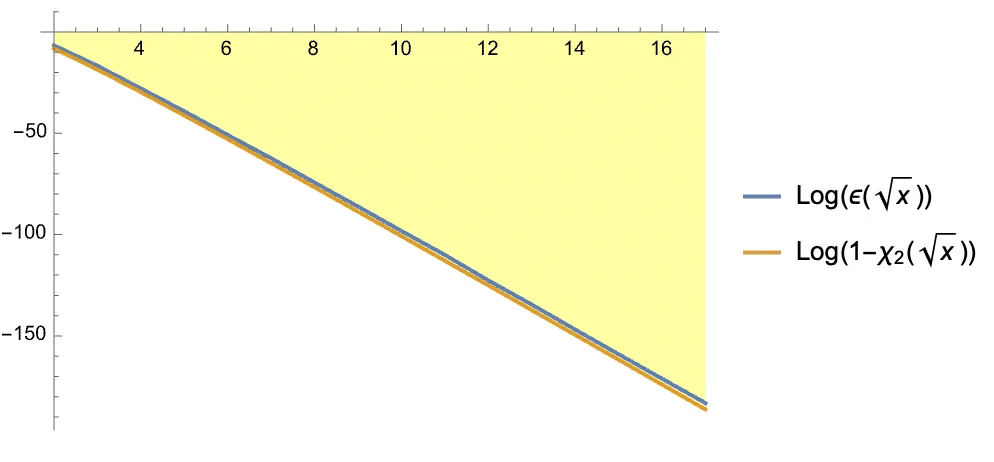

Cyclic block coordinate methods are a fundamental class of first-order algorithms, widely used in practice for their simplicity and strong empirical performance. Yet, their theoretical behavior remains challenging to explain, and setting their step sizes -- beyond classical coordinate descent for minimization -- typically requires careful tuning or line-search machinery. In this work, we develop $\texttt{ADUCA}$ (Adaptive Delayed-Update Cyclic Algorithm), a cyclic algorithm addressing a broad class of Minty variational inequalities with monotone Lipschitz operators. $\texttt{ADUCA}$ is parameter-free: it requires no global or block-wise Lipschitz constants and uses no per-epoch line search, except at initialization. A key feature of the algorithm is using operator information delayed by a full cycle, which makes the algorithm compatible with parallel and distributed implementations, and attractive due to weakened synchronization requirements across blocks. We prove that $\texttt{ADUCA}$ attains (near) optimal global oracle complexity as a function of target error $ε>0,$ scaling with $1/ε$ for monotone operators, or with $\log^2(1/ε)$ for operators that are strongly monotone.

2603.29128

Mar 2026 · Optimization and Control

$K$-means with learned metrics

We study the Fréchet $k$-means of a metric measure space when both the measure and the distance are unknown and have to be estimated. We prove a general result stating that the $k$-means are continuous with respect to the measured Gromov-Hausdorff topology. In this situation, we also prove a stability result for the Voronoi clusters they determine. We do not assume uniqueness of the set of $k$-means, but when it is unique, the results are stronger. This framework provides a unified approach to proving consistency for a wide range of metric learning procedures. As concrete applications, we obtain new consistency results for several important estimators that were previously unestablished, even when $k=1$. These include $k$-means based on: (i) Isomap and Fermat geodesic distances on manifolds, (ii) diffusion distances, (iii) Wasserstein distances computed with respect to learned ground metrics. Finally, we consider applications beyond the statistical inference paradigm, such as (iv) first passage percolation and (v) discrete approximations of length spaces.
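On a finite metric space, the Fréchet $k$-means objective can be evaluated by brute force. The sketch below is illustrative only and is not taken from the paper: it assumes the space is a finite point set described by a distance matrix `dist` (standing in for an estimated metric), restricts the centers to those points, and uses hypothetical names (`frechet_k_means`, `weights`).

```python
from itertools import combinations


def frechet_k_means(dist, k, weights=None):
    """Brute-force Frechet k-means on a finite metric space.

    dist[x][c] is the (possibly estimated) distance between points x and c.
    Returns the k center indices minimizing the weighted sum of squared
    distances to the nearest center, plus the induced Voronoi clusters.
    """
    n = len(dist)
    if weights is None:
        weights = [1.0 / n] * n  # uniform measure (an assumption of this sketch)

    def cost(centers):
        return sum(
            w * min(dist[x][c] for c in centers) ** 2
            for x, w in enumerate(weights)
        )

    best = min(combinations(range(n), k), key=cost)

    # Voronoi clusters: each point goes to its nearest selected center.
    clusters = {c: [] for c in best}
    for x in range(n):
        nearest = min(best, key=lambda c: dist[x][c])
        clusters[nearest].append(x)
    return best, clusters


# Toy example: six points on the real line with the usual distance.
points = [0, 1, 2, 10, 11, 12]
dist = [[abs(a - b) for b in points] for a in points]
centers, clusters = frechet_k_means(dist, k=2)
```

Swapping `dist` for a different estimated distance matrix (for instance a graph geodesic distance) changes the centers and clusters without changing the procedure, which is the kind of dependence on the metric that the continuity and stability results address.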

2603.14601

Mar 2026 · Statistics Theory

A Yamabe problem for the quotient between the $Q$ curvature and the scalar curvature

In this paper we introduce the following Yamabe problem for the quotient between the $Q$-curvature and the scalar curvature $R$: Find a conformal metric $g$ in a given conformal class $[g_0]$ with \[ Q_g/R_g=const. \] When the dimension $n\ge 5$, we first prove a new Sobolev inequality between the total $Q$-curvature and the total scalar curvature on $\mathbb{S}^n$, namely \[\frac{\int_{\mathbb{S}^n} Q_g d v_g}{\left(\int_{\mathbb{S}^n} R_g d v_g\right)^{\frac{n-4}{n-2}}} \geq \frac{\int_{\mathbb{S}^n} Q_{g_{\mathbb{S}^n}} d v\left(g_{\mathbb{S}^n}\right)}{\left(\int_{\mathbb{S}^n} R_{g_{\mathbb{S}^n}} d v\left(g_{\mathbb{S}^n}\right)\right)^{\frac{n-4}{n-2}}}\] for any $g$ in the conformal class of the round metric $g_{\mathbb{S}^n}$ with positive scalar curvature, with equality if and only if $g$ is also a metric with constant sectional curvature. With this inequality we introduce a new Yamabe constant $Y_{4,2}(M,[g_0])$ and prove the existence of a solution to the above problem provided that $Y_{4,2}(M,[g_0]) <Y_{4,2} (\mathbb{S}^n, [g_{\mathbb{S}^n}]).$ This strict inequality is proved if $(M,g)$ is not conformally equivalent to the round sphere. This follows from a crucial relation between $Y_{4,2}$ and the ordinary Yamabe constant $Y(M,[g_0])$, $Y_{4,2} (M, [g_0]) \le c(n) Y(M, [g_0])^{\frac n{n-2}}$ with equality if and only if $(M, g_0)$ is conformally equivalent to an Einstein manifold. Finally, we prove that on a closed $n$-dimensional Riemannian manifold $(M,g_{0})$ with semi-positive $Q$-curvature and non-negative scalar curvature, the above Yamabe problem is solvable, thanks to the maximum principle of Gursky-Malchiodi [33]. The proof for $n=3$ and $n=4$ follows closely the methods developed by Hang-Yang in [40], Gursky-Malchiodi in [33], and Chang-Yang in [12].

2603.15074

Mar 2026 · Differential Geometry

Reinforced Generation of Combinatorial Structures: Ramsey Numbers

We present improved lower bounds for five classical Ramsey numbers: $\mathbf{R}(3, 13)$ is increased from $60$ to $61$, $\mathbf{R}(3, 18)$ from $99$ to $100$, $\mathbf{R}(4, 13)$ from $138$ to $139$, $\mathbf{R}(4, 14)$ from $147$ to $148$, and $\mathbf{R}(4, 15)$ from $158$ to $159$. These results were achieved using AlphaEvolve, an LLM-based code mutation agent. Beyond these new results, we successfully recovered lower bounds for all Ramsey numbers known to be exact, and matched the best known lower bounds across many other cases. These include bounds for which previous work does not detail the algorithms used. Virtually all known Ramsey lower bounds are derived computationally, with bespoke search algorithms each delivering a handful of results. AlphaEvolve is a single meta-algorithm yielding search algorithms for all of our results.
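A lower bound $\mathbf{R}(s,t) > n$ is certified by a graph on $n$ vertices that contains no clique of size $s$ and no independent set of size $t$. The sketch below checks such a certificate by brute force on two classical small witnesses (the 5-cycle and the Paley graph on 17 vertices). It only illustrates what a witness is; it is not the search procedure described in the abstract, and the function name `is_ramsey_witness` is hypothetical.

```python
from itertools import combinations


def is_ramsey_witness(n, edges, s, t):
    """True if the graph on vertices 0..n-1 has no s-clique and no independent t-set.

    Such a graph certifies R(s, t) > n. The check enumerates all vertex
    subsets of size s and t, so it is only practical for small graphs.
    """
    adjacent = {frozenset(e) for e in edges}

    def all_pairs(vertices, want_edge):
        return all(
            (frozenset(pair) in adjacent) == want_edge
            for pair in combinations(vertices, 2)
        )

    has_clique = any(all_pairs(c, True) for c in combinations(range(n), s))
    has_independent = any(all_pairs(c, False) for c in combinations(range(n), t))
    return not has_clique and not has_independent


# The 5-cycle certifies R(3, 3) > 5.
cycle5 = [(i, (i + 1) % 5) for i in range(5)]

# The Paley graph on 17 vertices certifies R(4, 4) > 17:
# i and j are adjacent when their difference is a nonzero square mod 17.
squares = {(x * x) % 17 for x in range(1, 17)}
paley17 = [(i, j) for i, j in combinations(range(17), 2) if (j - i) % 17 in squares]

print(is_ramsey_witness(5, cycle5, 3, 3))
print(is_ramsey_witness(17, paley17, 4, 4))
```

Verification is cheap compared with the search: the difficulty in improving a bound lies in finding the witness graph, which is the part the abstract attributes to the generated search algorithms.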

2603.09172

Mar 2026 · Combinatorics

Instanton construction of the mapping cone Thom-Smale complex

The wedge by a smooth closed $\ell$-form induces the mapping cone de Rham cochain complex. This complex is quasi-isomorphic to the mapping cone Thom-Smale cochain complex. We give an instanton construction of the mapping cone Thom-Smale complex in this paper. More precisely, for a Morse function with the transversality condition on a closed oriented Riemannian manifold, we construct an instanton cochain complex using the eigenspaces of the mapping cone Laplacian deformed by the Morse function and two parameters. As the main result, we prove that our instanton complex is cochain isomorphic to the topologically constructed mapping cone Thom-Smale complex.

2603.08404

Mar 2026 · Differential Geometry

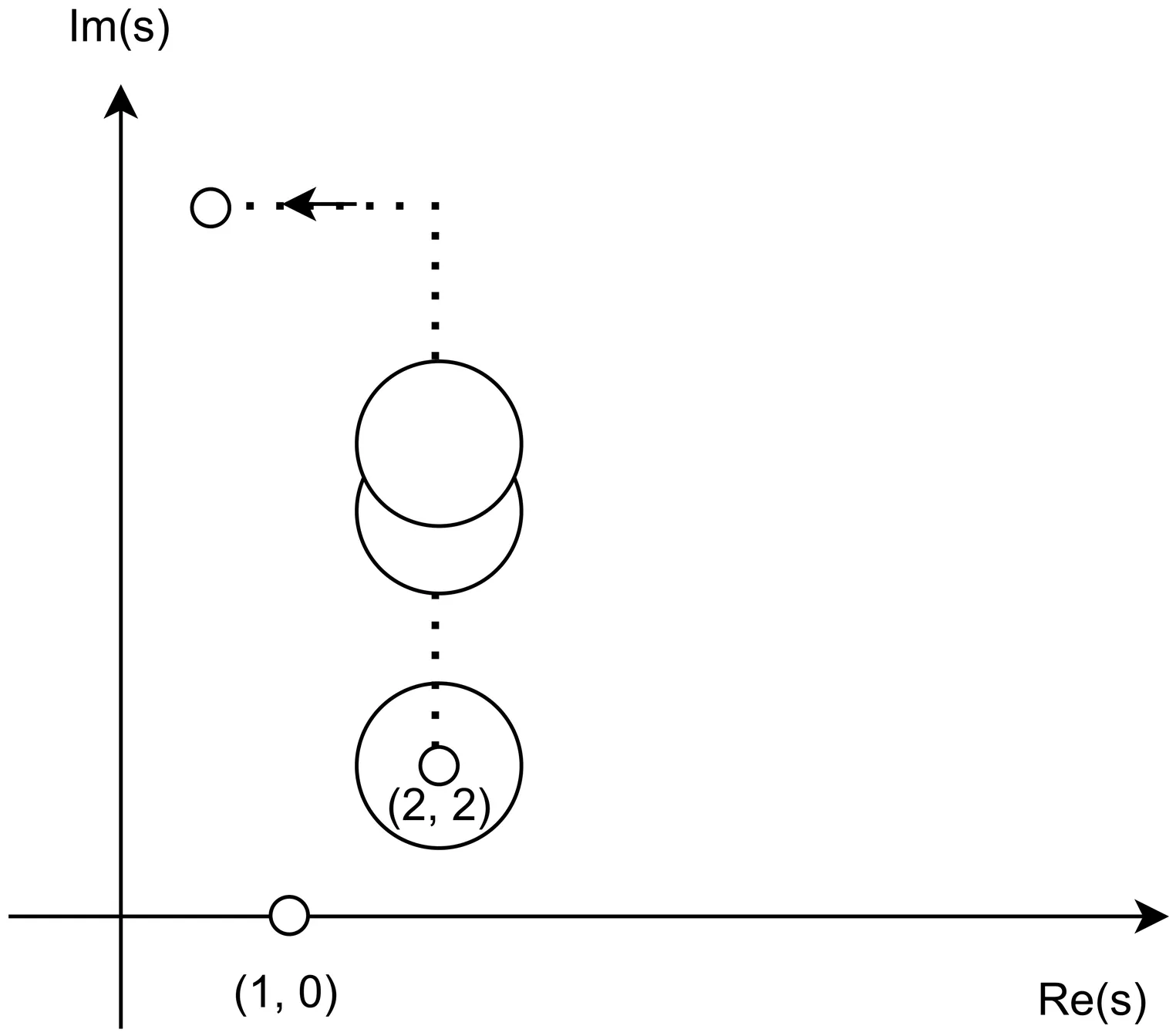

Analysis of the Riemann Zeta Function via Recursive Taylor Expansions

We present an unconditional proof that non-trivial zeros of the Riemann Zeta function must lie strictly on the critical line $\text{Re}(s) = 0.5$. By defining a recursive path of Taylor expansions originating from the domain of absolute convergence, we translate the zeta function towards the critical region, which is an easy-to-understand form of analytic continuation. We then assume the existence of off-critical-line (off-line) zeros, which occur in pairs symmetric about the critical line. If the zeta function vanishes at both points of a pair, the differences between the real parts and between the imaginary parts of its values there should both be zero. However, we derive a contradiction against the assumption via basic logical deduction, proving the non-existence of the off-line zeros.

2603.05122

Mar 2026 · General Mathematics

Mathematicians in the age of AI

Recent developments show that AI can prove research-level theorems in mathematics, both formally and informally. This essay urges mathematicians to stay up-to-date with the technology, to consider the ways it will disrupt mathematical practice, and to respond appropriately to the challenges and opportunities we now face.

2603.03684

Mar 2026 · History and Overview

Chromatic thresholds for linear equations and recurrence

Motivated by classical problems in extremal graph theory, we study a chromatic analogue of Roth-type questions for linear equations over $\mathbb F_p$. Given a homogeneous equation $\mathcal L:\sum_{i=1}^k c_i x_i=0$ with $k\ge 3$, we study $\mathcal L$-solution-free sets $A\subseteq \mathbb F_p$ through the chromatic number of the Cayley graph $\mathsf{Cay}(\mathbb F_p,A)$. We introduce the chromatic threshold $δ_χ(\mathcal L)$, the minimum density that guarantees bounded chromatic number of $\mathsf{Cay}(\mathbb F_p,A)$ among all $\mathcal L$-solution-free sets $A$, and determine exactly when $δ_χ(\mathcal L)=0$. We prove that $δ_χ(\mathcal L)=0$ if and only if $\mathcal L$ contains a zero-sum subcollection of at least three coefficients. A key ingredient is a quantitative chromatic lower bound for Cayley graphs on $\mathbb Z_p^n$ generated by Hamming balls around the all-ones vector. This is obtained by introducing a new Kneser-type graph that admits a natural embedding into $\mathbb Z_p^n$, together with an equivariant Borsuk--Ulam type argument. As a consequence, we resolve a question of Griesmer. We further relate our classification to the hierarchy of measurable, topological, and Bohr recurrence. In particular, we show that every infinite discrete abelian group admits a set that is topologically recurrent but not measurably recurrent, extending the seminal examples of Kříž and Ruzsa.

2603.05490

Mar 2026 · Combinatorics

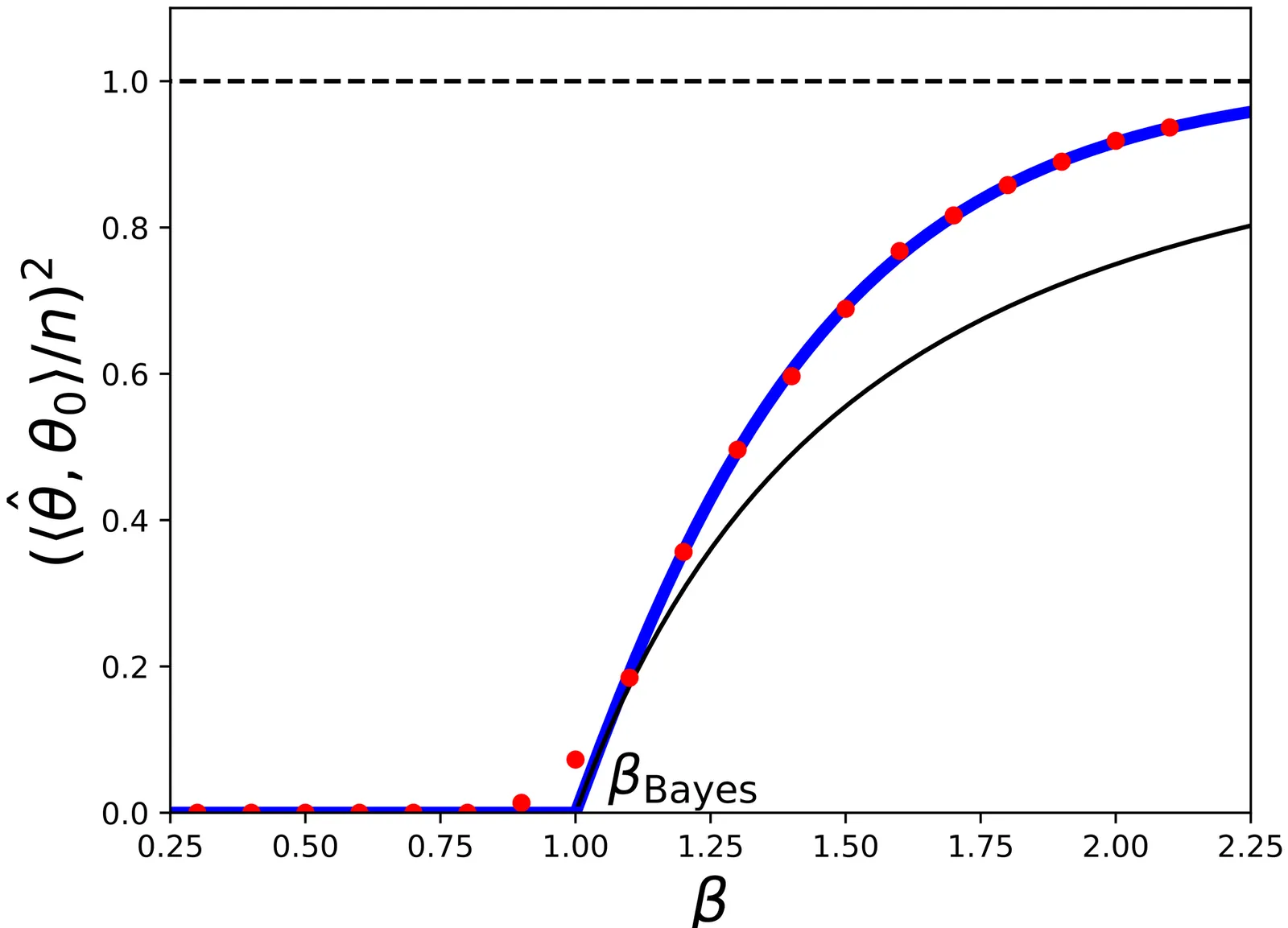

Spin Glass Concepts in Computer Science, Statistics, and Learning

Spin glass theory studies the structure of sublevel sets and minima (or near-minima) of certain classes of random functions in high dimension. Near-minima of random functions also play an important role in high-dimensional statistics and statistical learning, where minimizing the empirical risk (which is a random function of the model parameters) is the method of choice for learning a statistical model from noisy data. Finally, near-minima of random functions are obviously central to average-case analysis of optimization algorithms. Computer science, statistics, and machine learning naturally lead to questions that are traditionally not addressed within physics and mathematical physics. I will try to explain how ideas from spin glass theory have seeded recent developments in these fields. (This article was written on the occasion of the 2024 Abel Prize to Michel Talagrand.)

2602.23326

Feb 2026 · Probability

Derivations as Algebras

Differential categories provide the categorical foundations for the algebraic approaches to differentiation. They have been successful in formalizing various important concepts related to differentiation, such as, in particular, derivations. In this paper, we show that the differential modality of a differential category lifts to a monad on the arrow category and, moreover, that the algebras of this monad are precisely derivations. Furthermore, in the presence of finite biproducts, the differential modality in fact lifts to a differential modality on the arrow category. In other words, the arrow category of a differential category is again a differential category. As a consequence, derivations also form a tangent category, and derivations on free algebras form a cartesian differential category.

2602.16381

Feb 2026 · Category Theory

HAL-MLE Log-Splines Density Estimation (Part I: Univariate)

We study nonparametric maximum likelihood estimation of probability densities under a total variation (TV) type penalty, the sectional variation norm (also known as Hardy-Krause variation). TV regularization has a long history in regression and density estimation, including results on $L^2$ and KL divergence convergence rates. Here, we revisit this task using the Highly Adaptive Lasso (HAL) framework. We formulate a HAL-based maximum likelihood estimator (HAL-MLE) using the log-spline link function from \citet{kooperberg1992logspline}, and show that in the univariate setting the bounded sectional variation norm assumption underlying HAL coincides with the classical bounded TV assumption. This equivalence directly connects HAL-MLE to existing TV-penalized approaches such as locally adaptive splines \citep{mammen1997locally}. We establish three new theoretical results: (i) the univariate HAL-MLE is asymptotically linear, (ii) it admits pointwise asymptotic normality, and (iii) it achieves uniform convergence at rate $n^{-(k+1)/(2k+3)}$ up to logarithmic factors for the smoothness order $k \geq 1$. These results extend existing results from \citet{van2017uniform}, which previously guaranteed only uniform consistency without rates when $k=0$. We will treat uniform convergence for general dimension $d$ in follow-up work. The intention of this paper is to provide a unified framework for TV-penalized density estimation methods and to connect the HAL-MLE to existing TV-penalized methods in the univariate case, even though the general HAL-MLE is defined for multivariate cases.

2602.16259

Feb 2026 · Statistics Theory

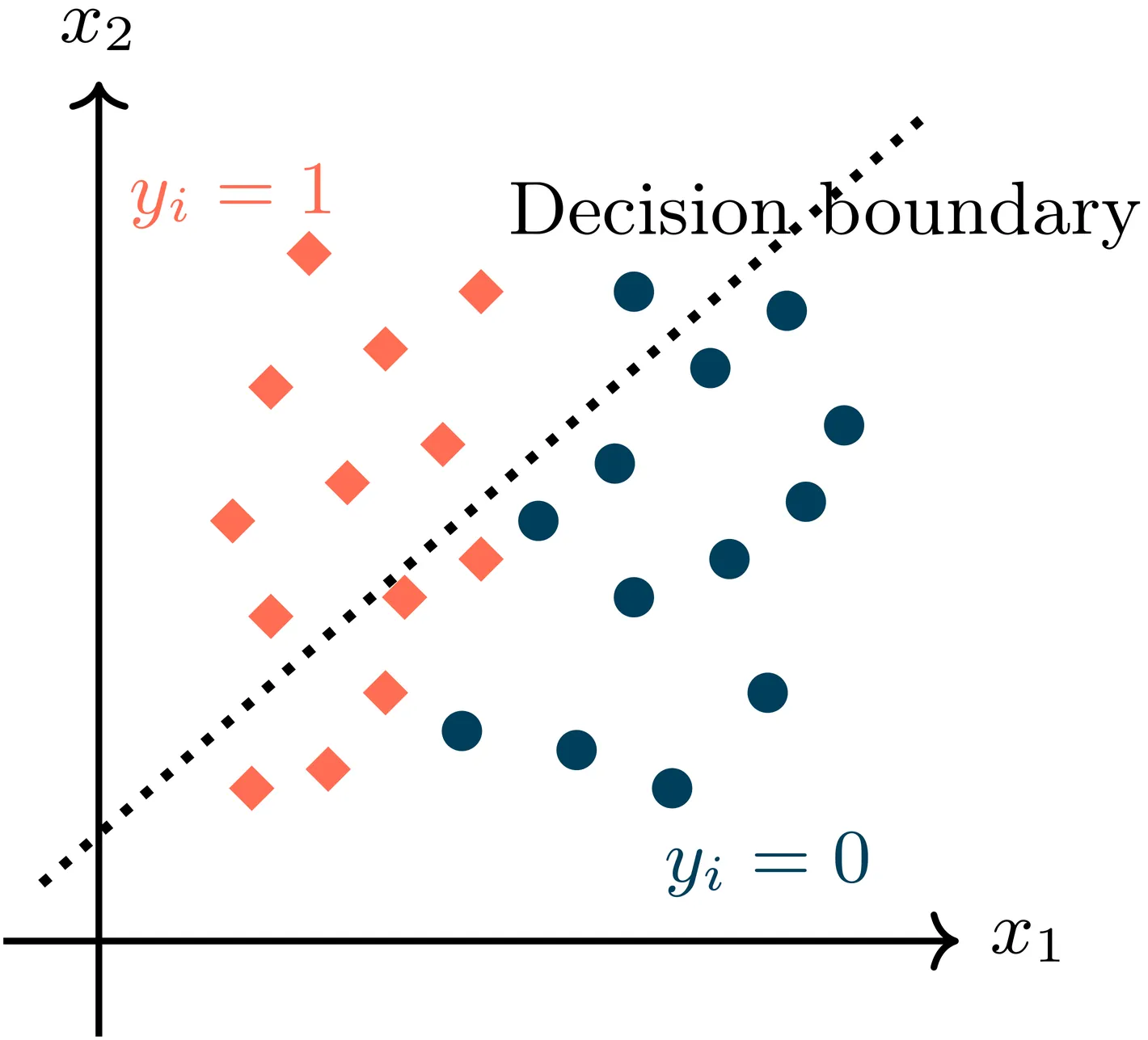

Do More Predictions Improve Statistical Inference? Filtered Prediction-Powered Inference

Recent advances in artificial intelligence have enabled the generation of large-scale, low-cost predictions with increasingly high fidelity. As a result, the primary challenge in statistical inference has shifted from data scarcity to data reliability. Prediction-powered inference methods seek to exploit such predictions to improve efficiency when labeled data are limited. However, existing approaches implicitly adopt a use-all philosophy, under which incorporating more predictions is presumed to improve inference. When prediction quality is heterogeneous, this assumption can fail, and indiscriminate use of unlabeled data may dilute informative signals and degrade inferential accuracy. In this paper, we propose Filtered Prediction-Powered Inference (FPPI), a framework that selectively incorporates predictions by identifying a data-adaptive filtered region in which predictions are informative for inference. We show that this region can be consistently estimated under a margin condition, achieving fast rates of convergence. By restricting the prediction-powered correction to the estimated filtered region, FPPI adaptively mitigates the impact of biased or noisy predictions. We establish that FPPI attains strictly improved asymptotic efficiency compared with existing prediction-powered inference methods. Numerical studies and a real-data application to large language model evaluation demonstrate that FPPI substantially reduces reliance on expensive labels by selectively leveraging reliable predictions, yielding accurate inference even in the presence of heterogeneous prediction quality.

2602.10464

Feb 2026 · Statistics Theory

High-Probability Minimax Adaptive Estimation in Besov Spaces via Online-to-Batch

We study nonparametric regression over Besov spaces from noisy observations under sub-exponential noise, aiming to achieve minimax-optimal guarantees on the integrated squared error that hold with high probability and adapt to the unknown noise level. To this end, we propose a wavelet-based online learning algorithm that dynamically adjusts to the observed gradient noise by adaptively clipping it at an appropriate level, eliminating the need to tune parameters such as the noise variance or gradient bounds. As a by-product of our analysis, we derive high-probability adaptive regret bounds that scale with the $\ell_1$-norm of the competitor. Finally, in the batch statistical setting, we obtain adaptive and minimax-optimal estimation rates for Besov spaces via a refined online-to-batch conversion. This approach carefully exploits the structure of the squared loss in combination with self-normalized concentration inequalities.

2602.11747

Feb 2026 · Statistics Theory

Fel's Conjecture on Syzygies of Numerical Semigroups

Let $S=\langle d_1,\dots,d_m\rangle$ be a numerical semigroup and $k[S]$ its semigroup ring. The Hilbert numerator of $k[S]$ determines normalized alternating syzygy power sums $K_p(S)$ encoding alternating power sums of syzygy degrees. Fel conjectured an explicit formula for $K_p(S)$, for all $p\ge 0$, in terms of the gap power sums $G_r(S)=\sum_{g\notin S} g^r$ and universal symmetric polynomials $T_n$ evaluated at the generator power sums $σ_k=\sum_i d_i^k$ (and $δ_k=(σ_k-1)/2^k$). We prove Fel's conjecture via exponential generating functions and coefficient extraction, isolating the universal identities for $T_n$ needed for the derivation. The argument is fully formalized in Lean/Mathlib, and was produced automatically by AxiomProver from a natural-language statement of the conjecture.
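The two families of inputs to the conjectured formula, the gap power sums $G_r(S)$ and the generator power sums $σ_k$, are easy to compute for small examples. The sketch below does so for $S=\langle 3,5\rangle$; it assumes the generators are positive integers with gcd 1 (so the gap set is finite), uses hypothetical function names, and does not attempt to implement the formula for $K_p(S)$ or the polynomials $T_n$, which the abstract does not state.

```python
from functools import reduce
from math import gcd


def gaps(generators):
    """Positive integers that are not in the numerical semigroup <generators>."""
    if reduce(gcd, generators) != 1:
        raise ValueError("generators must have gcd 1 so that the gap set is finite")
    smallest = min(generators)
    in_semigroup = [True]  # 0 always belongs to S
    found = []
    run = 1  # length of the current run of consecutive elements of S
    n = 0
    # Once `smallest` consecutive integers lie in S, every larger integer does
    # too (keep adding the smallest generator), so the search can stop.
    while run < smallest:
        n += 1
        member = any(n >= d and in_semigroup[n - d] for d in generators)
        in_semigroup.append(member)
        if member:
            run += 1
        else:
            run = 0
            found.append(n)
    return found


def gap_power_sum(generators, r):
    """G_r(S): the sum of g**r over all gaps g of S."""
    return sum(g ** r for g in gaps(generators))


def generator_power_sum(generators, k):
    """sigma_k: the sum of d**k over the generators d."""
    return sum(d ** k for d in generators)


print(gaps([3, 5]))  # -> [1, 2, 4, 7]
```

Here $G_0(S)$ is the number of gaps (the genus of $S$), so `gap_power_sum([3, 5], 0)` counts the four gaps listed above.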

2602.03716

Feb 2026 · Combinatorics

The Riemann Hypothesis: Past, Present and a Letter Through Time

This paper, commissioned as a survey of the Riemann Hypothesis, provides a comprehensive overview of 165 years of mathematical approaches to this fundamental problem, while introducing a new perspective that emerged during its preparation. The paper begins with a detailed description of what we know about the Riemann zeta function and its zeros, followed by an extensive survey of mathematical theories developed in pursuit of RH -- from classical analytic approaches to modern geometric and physical methods. We also discuss several equivalent formulations of the hypothesis. Within this survey framework, we present an original contribution in the form of a "Letter to Riemann," using only mathematics available in his time. This letter reveals a method inspired by Riemann's own approach to the conformal mapping theorem: by extremizing a quadratic form (restriction of Weil's quadratic form in modern language), we obtain remarkable approximations to the zeros of zeta. Using only primes less than 13, this optimization procedure yields approximations to the first 50 zeros with accuracies ranging from $2.6 \times 10^{-55}$ to $10^{-3}$. Moreover we prove a general result that these approximating values lie exactly on the critical line. Following the letter, we explain the underlying mathematics in modern terms, including the description of a deep connection of the Weil quadratic form with the world of information theory. The final sections develop a geometric perspective using trace formulas, outlining a potential proof strategy based on establishing convergence of zeros from finite to infinite Euler products. While completing the commissioned survey, these new results suggest a promising direction for future research on Riemann's conjecture.

2602.04022

Feb 2026 · Number Theory

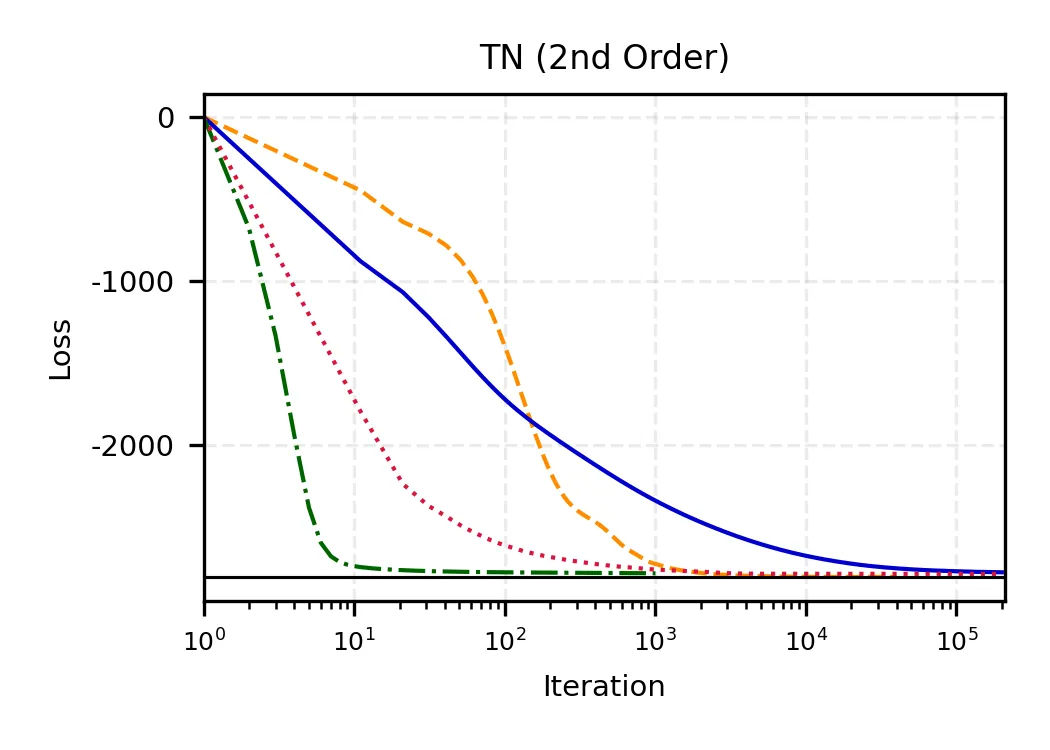

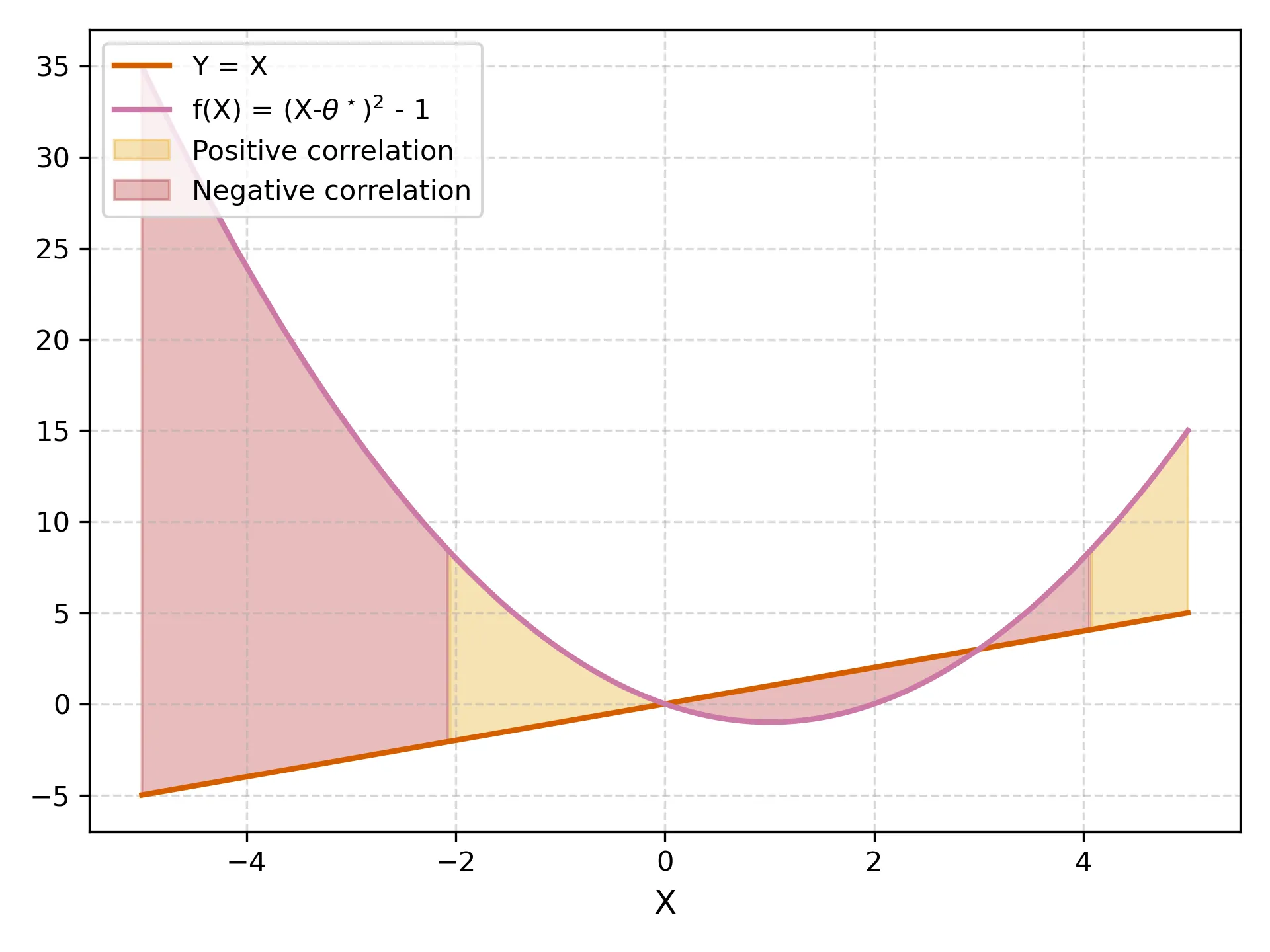

Introduction to optimization methods for training SciML models

Optimization is central to both modern machine learning (ML) and scientific machine learning (SciML), yet the structure of the underlying optimization problems differs substantially across these domains. Classical ML typically relies on stochastic, sample-separable objectives that favor first-order and adaptive gradient methods. In contrast, SciML often involves physics-informed or operator-constrained formulations in which differential operators induce global coupling, stiffness, and strong anisotropy in the loss landscape. As a result, optimization behavior in SciML is governed by the spectral properties of the underlying physical models rather than by data statistics, frequently limiting the effectiveness of standard stochastic methods and motivating deterministic or curvature-aware approaches. This document provides a unified introduction to optimization methods in ML and SciML, emphasizing how problem structure shapes algorithmic choices. We review first- and second-order optimization techniques in both deterministic and stochastic settings, discuss their adaptation to physics-constrained and data-driven SciML models, and illustrate practical strategies through tutorial examples, while highlighting open research directions at the interface of scientific computing and scientific machine learning.

2601.10222

Jan 2026 · Numerical Analysis

The motivic class of the space of genus $0$ maps to the flag variety

Let $\operatorname{Fl}_{n+1}$ be the variety of complete flags in $\mathbb{A}^{n+1}$ and let $Ω^{2}_β(\operatorname{Fl}_{n+1})$ be the space of based maps $f:\mathbb{P}^{1}\to \operatorname{Fl}_{n+1}$ in the class $f_{*}[\mathbb{P}^{1}]=β$. We show that under a mild positivity condition on $β$, the class of $Ω^{2}_β(\operatorname{Fl}_{n+1})$ in $K_{0}(\operatorname{Var})$, the Grothendieck group of varieties, is given by \[ [Ω^{2}_β(\operatorname{Fl}_{n+1})] = [\operatorname{GL}_{n}\times \mathbb{A}^{a}]. \] The proof of this result was obtained in conjunction with Google Gemini and related tools. We briefly discuss this research interaction, which may be of independent interest. However, the treatment in this paper is entirely human-authored (aside from excerpts in an appendix which are clearly marked as such).

2601.07222

Jan 2026 · Algebraic Geometry

Resolution of Erdős Problem #728: a writeup of Aristotle's Lean proof

We provide a writeup of a resolution of Erdős Problem #728; this is the first Erdős problem (a problem proposed by Paul Erdős which has been collected in the Erdős Problems website) regarded as fully resolved autonomously by an AI system. The system in question is a combination of GPT-5.2 Pro by OpenAI and Aristotle by Harmonic, operated by Kevin Barreto. The final result of the system is a formal proof written in Lean, which we translate to informal mathematics in the present writeup for wider accessibility. The proved result is as follows. We show a logarithmic-gap phenomenon regarding factorial divisibility: For any constants $0<C_1<C_2$ there exist infinitely many triples $(a,b,n)\in\mathbb N^3$ such that \[ a!\,b!\mid n!\,(a+b-n)!\qquad\text{and}\qquad C_1\log n < a+b-n < C_2\log n. \] The argument reduces this to a binomial divisibility $\binom{m+k}{k}\mid\binom{2m}{m}$ and studies it prime-by-prime. By Kummer's theorem, $ν_p\binom{2m}{m}$ translates into a carry count for doubling $m$ in base $p$. We then employ a counting argument to find, in each scale $[M,2M]$, an integer $m$ whose base-$p$ expansions simultaneously force many carries when doubling $m$, for every prime $p\le 2k$, while avoiding the rare event that one of $m+1,\dots,m+k$ is divisible by an unusually high power of $p$. These "carry-rich but spike-free" choices of $m$ force the needed $p$-adic inequalities and the divisibility. The overall strategy is similar to results regarding divisors of $\binom{2n}{n}$ studied earlier by Erdős and by Pomerance.
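The Kummer's theorem step can be checked numerically: the exponent of a prime $p$ in $\binom{2m}{m}$ equals the number of carries when $m$ is added to itself in base $p$. The sketch below compares the two quantities for small $m$ and $p$. It is an illustration of that single step with hypothetical function names, not a reproduction of the Lean proof or of the counting argument.

```python
from math import comb


def p_adic_valuation(n, p):
    """Largest e such that p**e divides the positive integer n."""
    e = 0
    while n % p == 0:
        n //= p
        e += 1
    return e


def carries_when_doubling(m, p):
    """Number of carries produced when computing m + m in base p."""
    carries = 0
    carry = 0
    while m > 0:
        digit = m % p
        # A carry leaves this position when the digit sum reaches the base.
        carry = 1 if 2 * digit + carry >= p else 0
        carries += carry
        m //= p
    return carries


# Kummer's theorem for the central binomial coefficient, checked on a small range.
for p in (2, 3, 5, 7, 11):
    for m in range(1, 200):
        assert p_adic_valuation(comb(2 * m, m), p) == carries_when_doubling(m, p)
```

In this picture, a "carry-rich" $m$ is one whose base-$p$ digits are mostly at least $p/2$, since each such digit forces a carry when $m$ is doubled.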

2601.07421

Jan 2026 · Number Theory

Complex Analysis and Riemann Surfaces: A Graduate Path to Algebraic Geometry

These lecture notes present a computation-driven pathway from classical complex analysis to the theory of compact Riemann surfaces and their connections to algebraic geometry. The exposition follows a compute-first, then-abstract philosophy, in which analytic and geometric structures are introduced through explicit calculations and local models before being organized into conceptual frameworks. The notes begin with the foundations of complex analysis, including holomorphic functions, Cauchy theory, power series, residues, and contour integration, with an emphasis on hands-on techniques such as Laurent expansions, residue calculus, and branch cut methods. These analytic tools are then used to construct Riemann surfaces explicitly via branched coverings and gluing constructions, which serve as recurring test cases throughout the text. Differential forms, Stokes' theorem, curvature, and the Gauss-Bonnet theorem provide the geometric bridge to Hodge theory, culminating in a detailed and self-contained treatment of the Hodge-Weyl theorem on compact Riemann surfaces, including weak formulations, regularity, and concrete examples. The algebraic-geometric core develops holomorphic line bundles, divisors, the Picard group, and sheaves, followed by Čech and sheaf cohomology, the exponential sequence, and de Rham and Dolbeault theories, all treated with explicit computations. The Riemann-Roch theorem is presented with full proofs and applications, leading to the construction of the Jacobian, Abel-Jacobi theory, theta functions, and the correspondence between Riemann surfaces, algebraic curves, and Galois coverings. Originating from collaborative study groups associated with the Enjoying Math community, these notes are intended for graduate students seeking a concrete and unified route from complex analysis to algebraic geometry.

2601.06868

Jan 2026 · Complex Variables

A classification of coadjoint orbits carrying Gibbs ensembles

A coadjoint orbit $O_λ\subseteq {\mathfrak g}^*$ of a Lie group $G$ is said to carry a Gibbs ensemble if the set of all $x \in {\mathfrak g}$, for which the function $α\mapsto e^{-α(x)}$ on the orbit is integrable with respect to the Liouville measure, has non-empty interior $Ω_λ$. We describe a classification of all coadjoint orbits of finite-dimensional Lie algebras with this property. In the context of Souriau's Lie group thermodynamics, the subset $Ω_λ$ is the geometric temperature, a parameter space for a family of Gibbs measures on the coadjoint orbit. The corresponding Fenchel--Legendre transform maps $Ω_λ/{\mathfrak z}({\mathfrak g})$ diffeomorphically onto the interior of the convex hull of the coadjoint orbit $O_λ$. This provides an interesting perspective on the underlying information geometry. We also show that already the integrability of $e^{-α(x)}$ for one $x \in {\mathfrak g}$ implies that $Ω_λ\not=\emptyset$ and that, for general Hamiltonian actions, the existence of Gibbs measures implies that the range of the momentum maps consists of coadjoint orbits $O_λ$ as above.

2601.04934

Jan 2026 · Symplectic Geometry