4,350 papers

An AI Teaching Assistant for Motion Picture Engineering

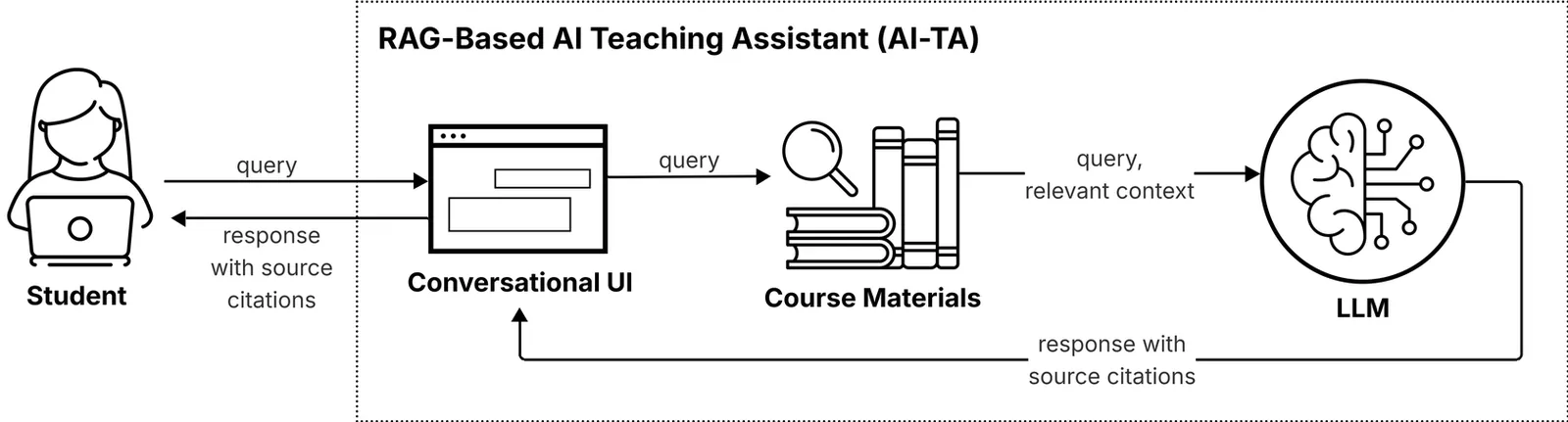

The rapid rise of LLMs over the last few years has promoted growing experimentation with LLM-driven AI tutors. However, the details of implementation, as well as the benefit in a teaching environment, are still in the early days of exploration. This article addresses these issues in the context of implementation of an AI Teaching Assistant (AI-TA) using Retrieval Augmented Generation (RAG) for Trinity College Dublin's Master's Motion Picture Engineering (MPE) course. We provide details of our implementation (including the prompt to the LLM, and code), and highlight how we designed and tuned our RAG pipeline to meet course needs. We describe our survey instrument and report on the impact of the AI-TA through a number of quantitative metrics. The scale of our experiment (43 students, 296 sessions, 1,889 queries over 7 weeks) was sufficient to have confidence in our findings. Unlike previous studies, we experimented with allowing the use of the AI-TA in open-book examinations. Statistical analysis across three exams showed no performance differences regardless of AI-TA access (p > 0.05), demonstrating that thoughtfully designed assessments can maintain academic validity. Student feedback revealed that the AI-TA was beneficial (mean = 4.22/5), while students had mixed feelings about preferring it over human tutoring (mean = 2.78/5).

2604.04670 Apr 2026

TM-BSN: Triangular-Masked Blind-Spot Network for Real-World Self-Supervised Image Denoising

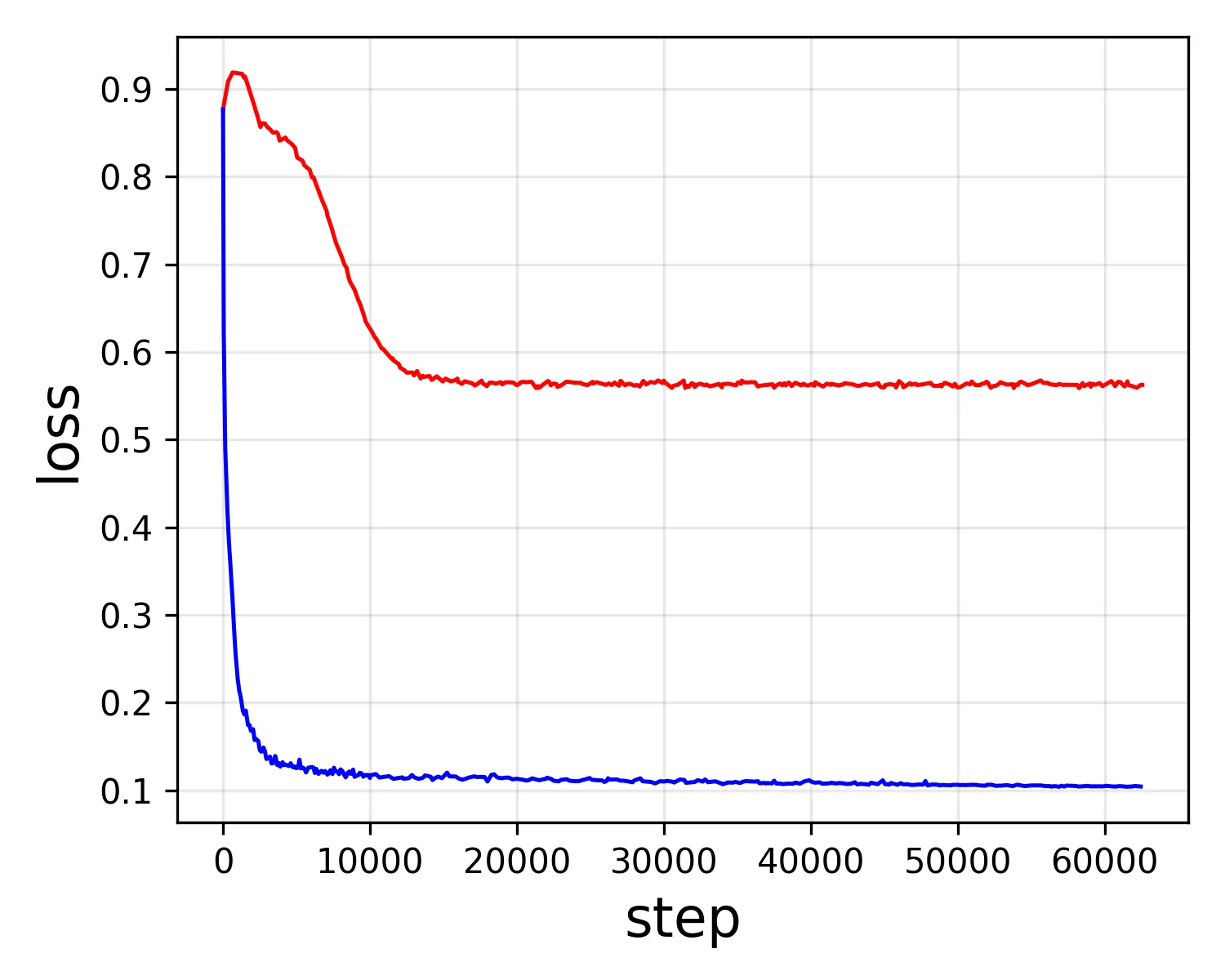

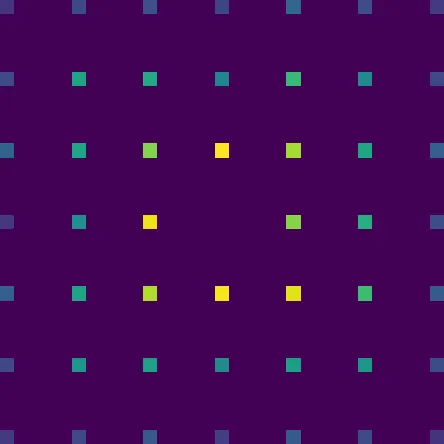

Blind-spot networks (BSNs) enable self-supervised image denoising by preventing access to the target pixel, allowing clean signal estimation without ground-truth supervision. However, this approach assumes pixel-wise noise independence, which is violated in real-world sRGB images due to spatially correlated noise from the camera's image signal processing (ISP) pipeline. While several methods employ downsampling to decorrelate noise, they alter noise statistics and limit the network's ability to utilize full contextual information. In this paper, we propose the Triangular-Masked Blind-Spot Network (TM-BSN), a novel blind-spot architecture that accurately models the spatial correlation of real sRGB noise. This correlation originates from demosaicing, where each pixel is reconstructed from neighboring samples with spatially decaying weights, resulting in a diamond-shaped pattern. To align the receptive field with this geometry, we introduce a triangular-masked convolution that restricts the kernel to its upper-triangular region, creating a diamond-shaped blind spot at the original resolution. This design excludes correlated pixels while fully leveraging uncorrelated context, eliminating the need for downsampling or post-processing. Furthermore, we use knowledge distillation to transfer complementary knowledge from multiple blind-spot predictions into a lightweight U-Net, improving both accuracy and efficiency. Extensive experiments on real-world benchmarks demonstrate that our method achieves state-of-the-art performance, significantly outperforming existing self-supervised approaches. Our code is available at https://github.com/parkjun210/TM-BSN.

2604.04484 Apr 2026

NAIMA: Semantics Aware RGB Guided Depth Super-Resolution

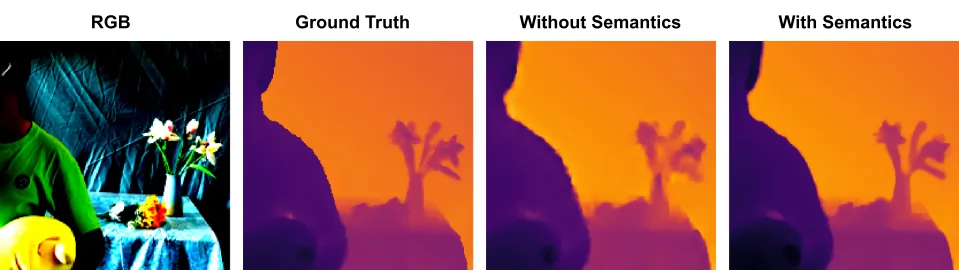

Guided depth super-resolution (GDSR) is a multi-modal approach for depth map super-resolution that relies on a low-resolution depth map and a high-resolution RGB image to restore finer structural details. However, the misleading color and texture cues indicating depth discontinuities in RGB images often lead to artifacts and blurred depth boundaries in the generated depth map. We propose a solution that introduces global contextual semantic priors, generated from pretrained vision transformer token embeddings. Our approach to distilling semantic knowledge from pretrained token embeddings is motivated by their demonstrated effectiveness in related monocular depth estimation tasks. We introduce a Guided Token Attention (GTA) module, which iteratively aligns encoded RGB spatial features with depth encodings, using cross-attention for selectively injecting global semantic context extracted from different layers of a pretrained vision transformer. Additionally, we present an architecture called Neural Attention for Implicit Multi-token Alignment (NAIMA), which integrates DINOv2 with GTA blocks for a semantics-aware GDSR. Our proposed architecture, with its ability to distill semantic knowledge, achieves significant improvements over existing methods across multiple scaling factors and datasets.

2604.04407 Apr 2026

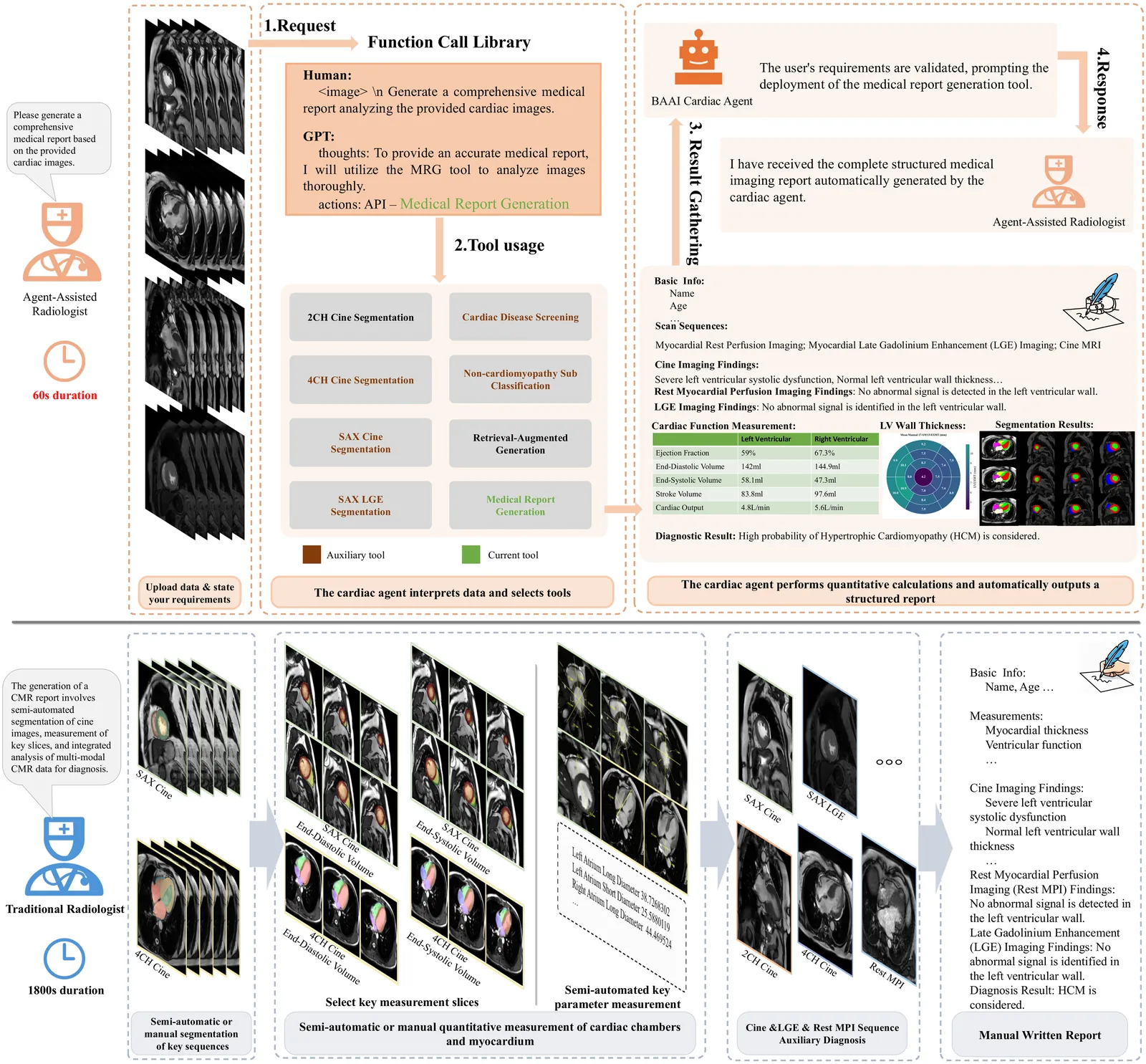

BAAI Cardiac Agent: An intelligent multimodal agent for automated reasoning and diagnosis of cardiovascular diseases from cardiac magnetic resonance imaging

Cardiac magnetic resonance (CMR) is a cornerstone for diagnosing cardiovascular disease. However, it remains underutilized because interpretation across multiple sequences, phases, and quantitative measures is complex, time-consuming, and heavily reliant on specialized expertise. Here, we present BAAI Cardiac Agent, a multimodal intelligent system designed for end-to-end CMR interpretation. The agent integrates specialized cardiac expert models to perform automated segmentation of cardiac structures, functional quantification, tissue characterization and disease diagnosis, and generates structured clinical reports within a unified workflow. Evaluated on CMR datasets from two hospitals (2413 patients) spanning 7 types of major cardiovascular diseases, the agent achieved an area under the receiver-operating-characteristic curve exceeding 0.93 internally and 0.81 externally. In the task of estimating left ventricular function indices, the results generated by this system for core parameters such as ejection fraction, stroke volume, and left ventricular mass are highly consistent with clinical reports, with Pearson correlation coefficients all exceeding 0.90. The agent outperformed state-of-the-art models in segmentation and diagnostic tasks, and generated clinical reports showing high concordance with expert radiologists (six readers across three experience levels). By dynamically orchestrating expert models for coordinated multimodal analysis, this agent framework enables accurate, efficient CMR interpretation and highlights its potential for complex clinical imaging workflows. Code is available at https://github.com/plantain-herb/Cardiac-Agent.

2604.04078 Apr 2026

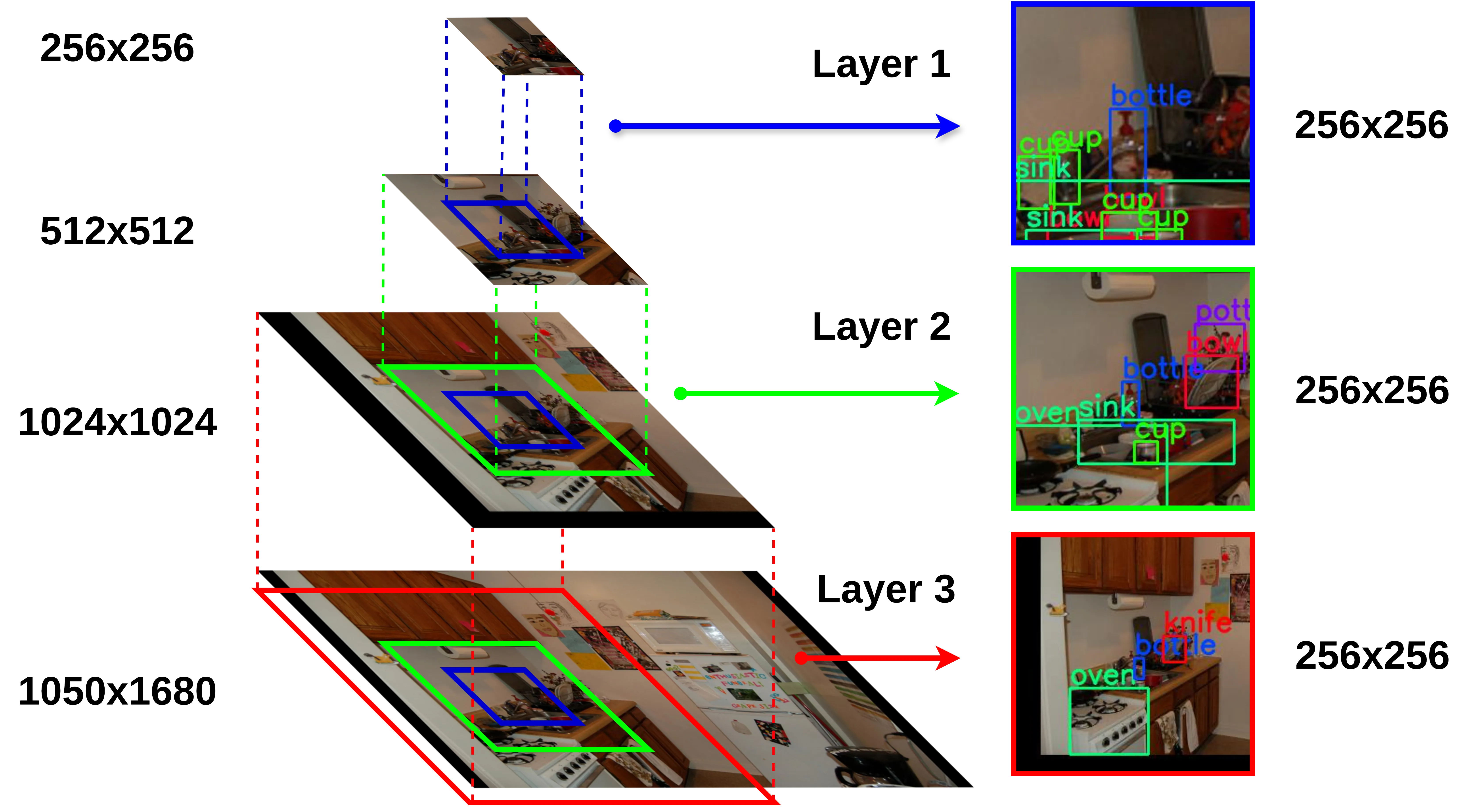

Cost-Efficient Multi-Scale Fovea for Semantic-Based Visual Search Attention

Semantics are one of the primary sources of top-down preattentive information. Modern deep object detectors excel at extracting such valuable semantic cues from complex visual scenes. However, the size of the visual input to be processed by these detectors can become a bottleneck, particularly in terms of time costs, affecting an artificial attention system's biological plausibility and real-time deployability. Inspired by classical exponential density roll-off topologies, we apply a new artificial foveation module to our novel attention prediction pipeline: the Semantic-based Bayesian Attention (SemBA) framework. We aim to reduce detection-related computational costs without compromising visual task accuracy, thereby making SemBA more biologically plausible. The proposed multi-scale pyramidal field-of-view retains maximum acuity at an innermost level, around a focal point, while gradually increasing distortion for outer levels to mimic peripheral uncertainty via downsampling. In this work we evaluate the performance of our novel Multi-Scale Fovea, incorporated into SemBA, on target-present visual search. We also compare it against other artificial foveal systems, and conduct ablation studies with different deep object detection models to assess the impact of the new topology in terms of computational costs. We experimentally demonstrate that including the new Multi-Scale Fovea module effectively reduces inherent processing costs while improving SemBA's scanpath prediction accuracy. Remarkably, we show that SemBA closely approximates human consistency while retaining the actual human fovea's proportions.

2604.03836 Apr 2026
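
The multi-scale pyramidal field-of-view described in the abstract above can be illustrated with a small sketch. This is not the SemBA implementation: the function name `multi_scale_fovea`, the doubling of window size per level, and the use of plain strided subsampling as the downsampling step are all assumptions made for illustration.

```python
import numpy as np

def multi_scale_fovea(image, center, base_size, levels):
    """Return `levels` square patches centred on `center` (hypothetical helper).

    Level i covers a window of base_size * 2**i pixels per side and keeps
    every 2**i-th pixel, so all patches end up base_size x base_size.
    The innermost level keeps full resolution; outer levels are coarser.
    """
    cy, cx = center
    patches = []
    for level in range(levels):
        stride = 2 ** level
        half = (base_size * stride) // 2
        # Edge-pad so windows near the border stay the same size.
        padded = np.pad(image, half, mode="edge")
        window = padded[cy:cy + 2 * half, cx:cx + 2 * half]
        patches.append(window[::stride, ::stride])
    return patches

image = np.arange(64 * 64, dtype=float).reshape(64, 64)
patches = multi_scale_fovea(image, center=(32, 32), base_size=8, levels=3)

# Pixels handed to a detector: three 8x8 patches instead of the 64x64 image.
foveated_pixels = sum(p.size for p in patches)
full_pixels = image.size
```

Under these assumptions the detector input shrinks from `full_pixels` to `foveated_pixels` samples, which is the kind of cost reduction the abstract refers to; a real system would likely low-pass filter before subsampling to avoid aliasing.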

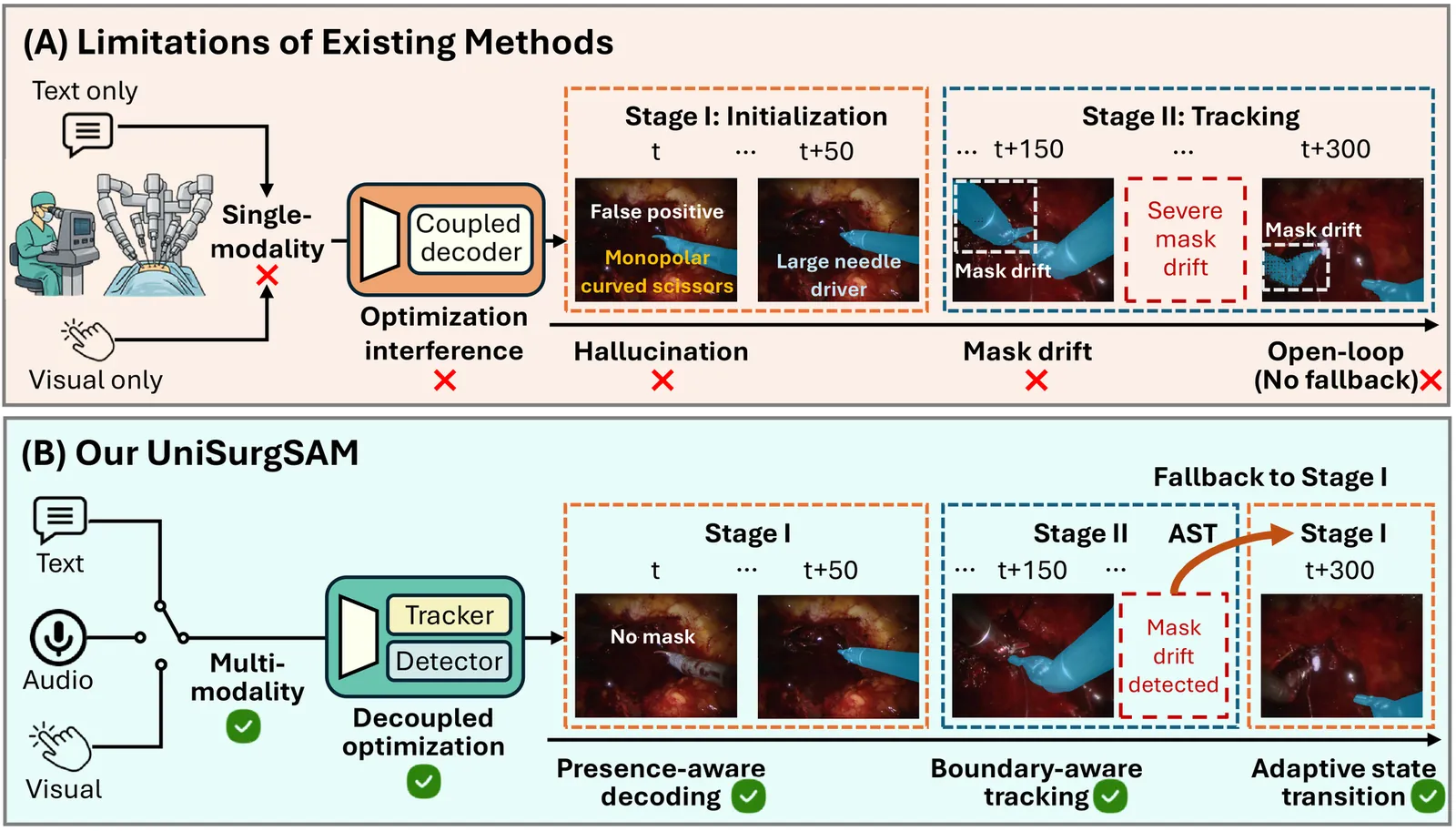

UniSurgSAM: A Unified Promptable Model for Reliable Surgical Video Segmentation

Surgical video segmentation is fundamental to computer-assisted surgery. In practice, surgeons need to dynamically specify targets throughout extended procedures, using heterogeneous cues such as visual selections, textual expressions, or audio instructions. However, existing Promptable Video Object Segmentation (PVOS) methods are typically restricted to a single prompt modality and rely on coupled frameworks that cause optimization interference between target initialization and tracking. Moreover, these methods produce hallucinated predictions when the target is absent and suffer from accumulated mask drift without failure recovery. To address these challenges, we present UniSurgSAM, a unified PVOS model enabling reliable surgical video segmentation through visual, textual, or audio prompts. Specifically, UniSurgSAM employs a decoupled two-stage framework that independently optimizes initialization and tracking to resolve the optimization interference. Within this framework, we introduce three key designs for reliability: presence-aware decoding that models target absence to suppress hallucinations; boundary-aware long-term tracking that prevents mask drift over extended sequences; and adaptive state transition that closes the loop between stages for failure recovery. Furthermore, we establish a multi-modal and multi-granular benchmark from four public surgical datasets with precise instance-level masklets. Extensive experiments demonstrate that UniSurgSAM achieves state-of-the-art performance in real time across all prompt modalities and granularities, providing a practical foundation for computer-assisted surgery. Code and datasets will be available at https://jinlab-imvr.github.io/UniSurgSAM.

2604.03645 Apr 2026

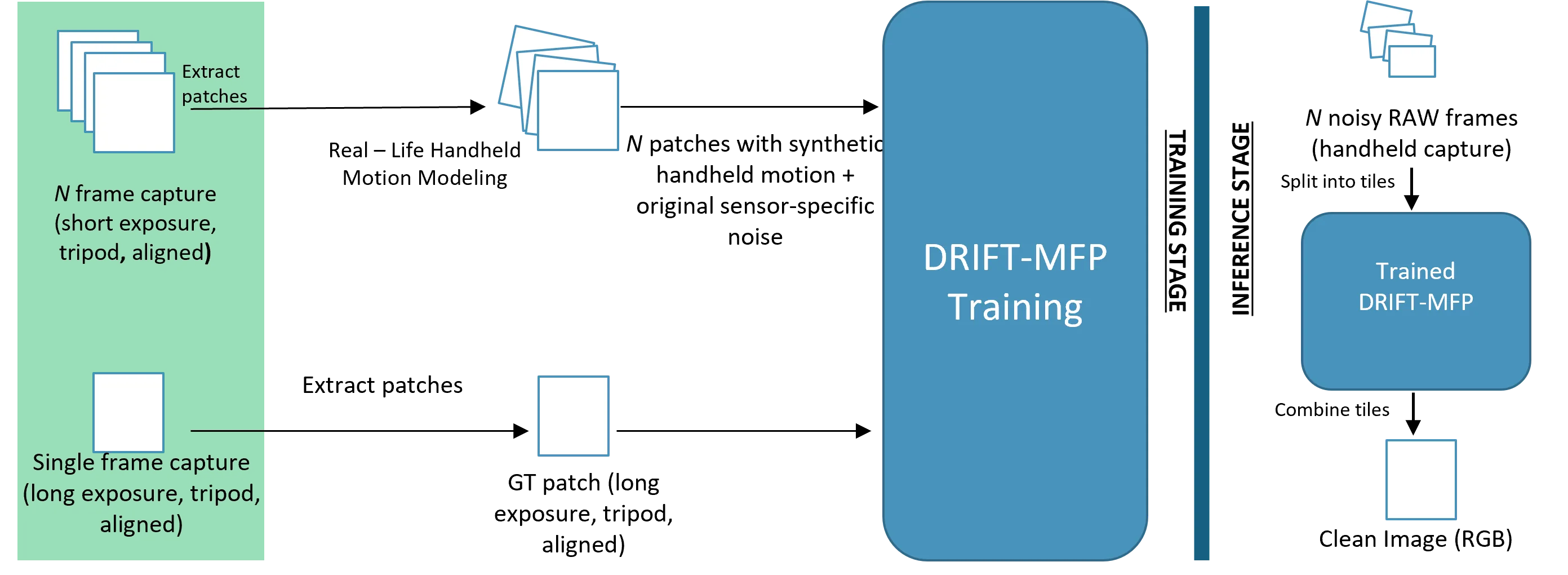

DRIFT: Deep Restoration, ISP Fusion, and Tone-mapping

Smartphone cameras have gained immense popularity with the adoption of high-resolution and high-dynamic range imaging. As a result, high-performance camera Image Signal Processors (ISPs) are crucial in generating high-quality images for the end user while keeping computational costs low. In this paper, we propose DRIFT (Deep Restoration, ISP Fusion, and Tone-mapping): an efficient AI mobile camera pipeline that generates high-quality RGB images from hand-held raw captures. The first stage of DRIFT is a Multi-Frame Processing (MFP) network that is trained using an adversarial perceptual loss to perform multi-frame alignment, denoising, demosaicing, and super-resolution. Then, the output of DRIFT-MFP is processed by a novel deep-learning based tone-mapping (DRIFT-TM) solution that allows for tone tunability, ensures tone-consistency with a reference pipeline, and can be run efficiently for high-resolution images on a mobile device. We show qualitative and quantitative comparisons against state-of-the-art MFP and tone-mapping methods to demonstrate the effectiveness of our approach.

2604.03402 Apr 2026

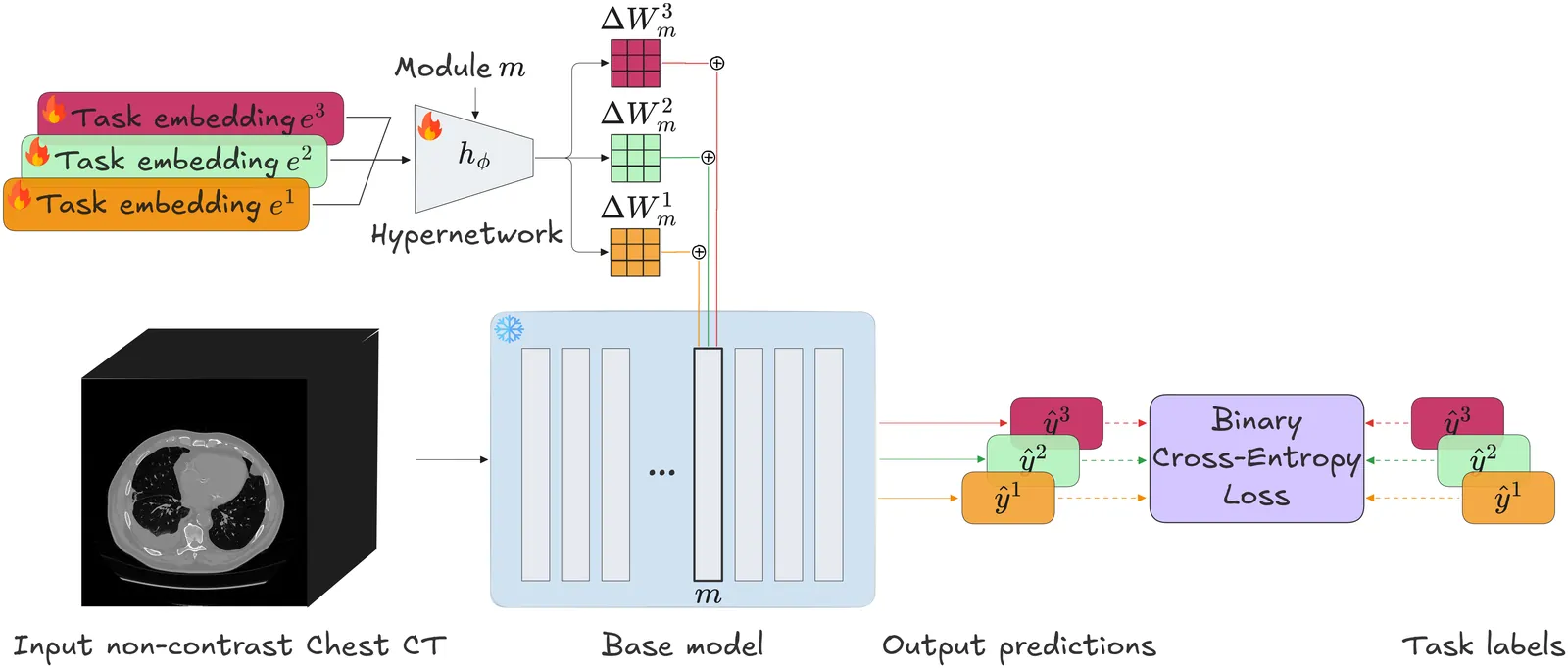

HyperCT: Low-Rank Hypernet for Unified Chest CT Analysis

Non-contrast chest CTs offer a rich opportunity for both conventional pulmonary and opportunistic extra-pulmonary screening. While Multi-Task Learning (MTL) can unify these diverse tasks, standard hard-parameter sharing approaches are often suboptimal for modeling distinct pathologies. We propose HyperCT, a framework that dynamically adapts a Vision Transformer backbone via a Hypernetwork. To ensure computational efficiency, we integrate Low-Rank Adaptation (LoRA), allowing the model to regress task-specific low-rank weight updates rather than full parameters. Validated on a large-scale dataset of radiological and cardiological tasks, HyperCT outperforms various strong baselines, offering a unified, parameter-efficient solution for holistic patient assessment. Our code is available at https://github.com/lfb-1/HyperCT.

2604.03224 Apr 2026
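
To make concrete the idea of regressing task-specific low-rank weight updates instead of full parameters, here is a minimal NumPy sketch. It is not the HyperCT code: the linear "hypernetwork" matrices `hyper_a` and `hyper_b`, the function `lora_update`, and all dimensions are hypothetical choices for illustration.

```python
import numpy as np

def lora_update(task_embedding, hyper_a, hyper_b, d_out, d_in, rank):
    """Map a task embedding to a low-rank weight update B @ A (illustrative)."""
    # The hypothetical hypernetwork is a pair of linear maps that emit
    # the two LoRA factors, which are then reshaped into matrices.
    a = (hyper_a @ task_embedding).reshape(rank, d_in)
    b = (hyper_b @ task_embedding).reshape(d_out, rank)
    return b @ a

rng = np.random.default_rng(0)
d_out, d_in, rank, embed_dim = 64, 64, 4, 8

base_weight = rng.standard_normal((d_out, d_in))   # shared backbone weight
hyper_a = rng.standard_normal((rank * d_in, embed_dim)) * 0.01
hyper_b = rng.standard_normal((d_out * rank, embed_dim)) * 0.01
task_embedding = rng.standard_normal(embed_dim)    # one embedding per task

delta = lora_update(task_embedding, hyper_a, hyper_b, d_out, d_in, rank)
adapted_weight = base_weight + delta               # task-specific weight

# Values the hypernetwork must regress per layer and task.
full_params = d_out * d_in
low_rank_params = rank * (d_in + d_out)
```

With these illustrative sizes the hypernetwork has to produce `low_rank_params` values per layer rather than `full_params`, and the update has rank at most `rank` by construction.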

NeuralLVC: Neural Lossless Video Compression via Masked Diffusion with Temporal Conditioning

While neural lossless image compression has advanced significantly with learned entropy models, lossless video compression remains largely unexplored in the neural setting. We present NeuralLVC, a neural lossless video codec that combines masked diffusion with an I/P-frame architecture for exploiting temporal redundancy. Our I-frame model compresses individual frames using bijective linear tokenization that guarantees exact pixel reconstruction. The P-frame model compresses temporal differences between consecutive frames, conditioned on the previous decoded frame via a lightweight reference embedding that adds only 1.3% trainable parameters. Group-wise decoding enables controllable speed-compression trade-offs. Our codec is lossless in the input domain: for video, it reconstructs YUV420 planes exactly; for image evaluation, RGB channels are reconstructed exactly. Experiments on 9 Xiph CIF sequences show that NeuralLVC outperforms H.264 and H.265 lossless by a significant margin. We verify exact reconstruction through end-to-end encode-decode testing with arithmetic coding. These results suggest that masked diffusion with temporal conditioning is a promising direction for neural lossless video compression.

2604.03353 Apr 2026
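
The reason coding temporal differences can remain exactly lossless is easy to check on 8-bit data. The sketch below is a generic modular-residual scheme, not NeuralLVC's P-frame model (which additionally conditions a learned entropy model on the previous frame); the function names `encode_p_frame` and `decode_p_frame` are hypothetical.

```python
import numpy as np

def encode_p_frame(current, previous):
    """Residual between consecutive 8-bit frames, wrapped into [0, 255]."""
    diff = current.astype(np.int16) - previous.astype(np.int16)
    return (diff % 256).astype(np.uint8)

def decode_p_frame(residual, previous):
    """Invert encode_p_frame exactly using the previously decoded frame."""
    total = previous.astype(np.int16) + residual.astype(np.int16)
    return (total % 256).astype(np.uint8)

rng = np.random.default_rng(0)
previous = rng.integers(0, 256, size=(4, 4), dtype=np.uint8)
current = rng.integers(0, 256, size=(4, 4), dtype=np.uint8)

residual = encode_p_frame(current, previous)
reconstructed = decode_p_frame(residual, previous)
is_lossless = np.array_equal(reconstructed, current)
```

Because the residual is taken modulo 256, it fits in the same 8 bits as the input and the decoder recovers every pixel exactly; any compression gain then comes from the entropy coder assigning short codes to the small residuals typical of static regions.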

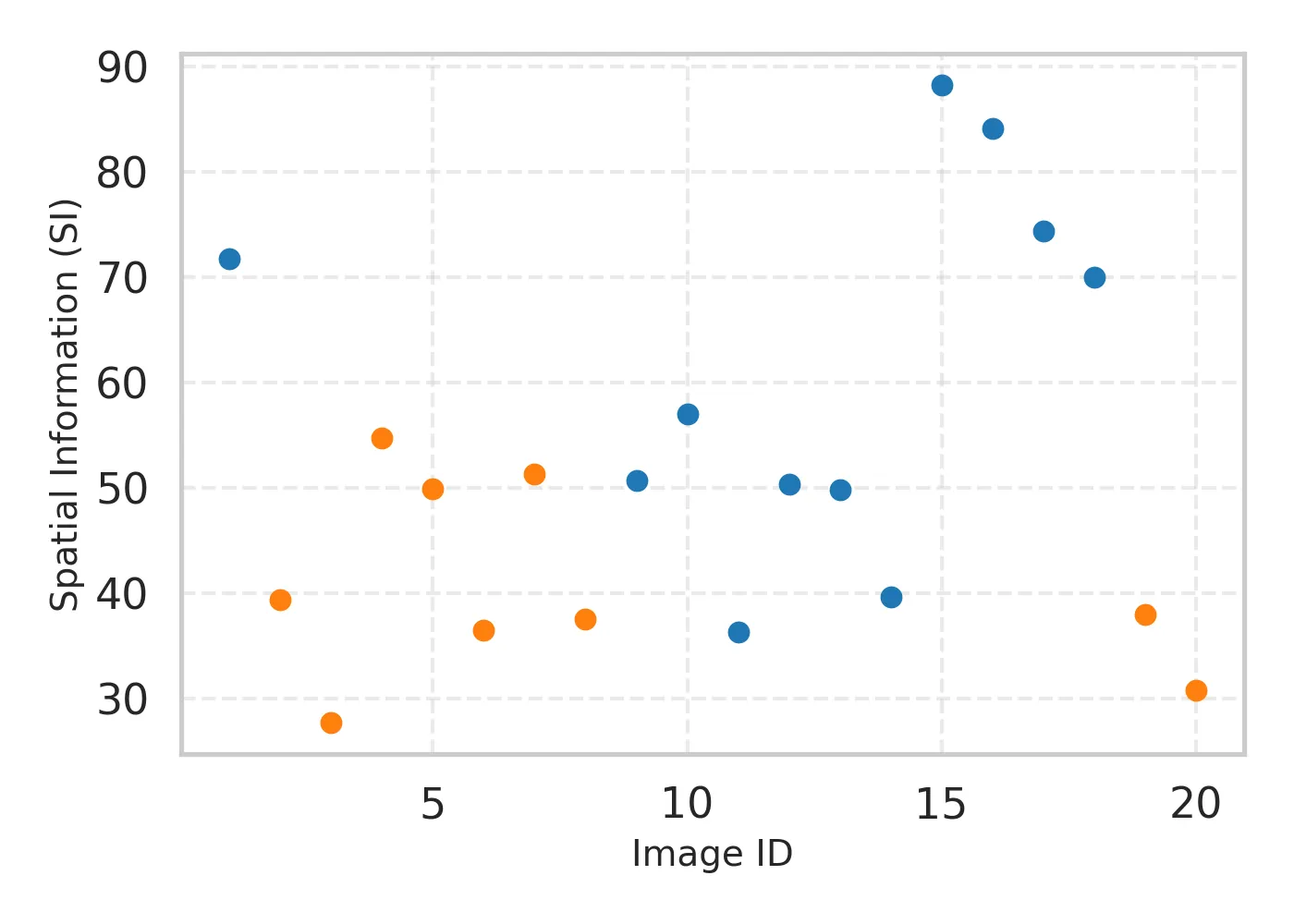

ARIQA-3DS: A Stereoscopic Image Quality Assessment Dataset for Realistic Augmented Reality

As Augmented Reality (AR) technologies advance towards immersive consumer adoption, the need for rigorous Quality of Experience (QoE) assessment becomes critical. However, existing datasets often lack ecological validity, relying on monocular viewing or simplified backgrounds that fail to capture the complex perceptual interplay, termed visual confusion, between real and virtual layers. To address this gap, we present ARIQA-3DS, the first large stereoscopic AR Image Quality Assessment dataset. Comprising 1,200 AR viewports, the dataset fuses high-resolution stereoscopic omnidirectional captures of real-world scenes with diverse augmented foregrounds under controlled transparency and degradation conditions. We conducted a comprehensive subjective study with 36 participants using a video see-through head-mounted display, collecting both quality ratings and simulator-sickness indicators. Our analysis reveals that perceived quality is primarily driven by foreground degradations and modulated by transparency levels, while oculomotor and disorientation symptoms show a progressive but manageable increase during viewing. ARIQA-3DS will be publicly released to serve as a comprehensive benchmark for developing next-generation AR quality assessment models.

2604.03112 Apr 2026

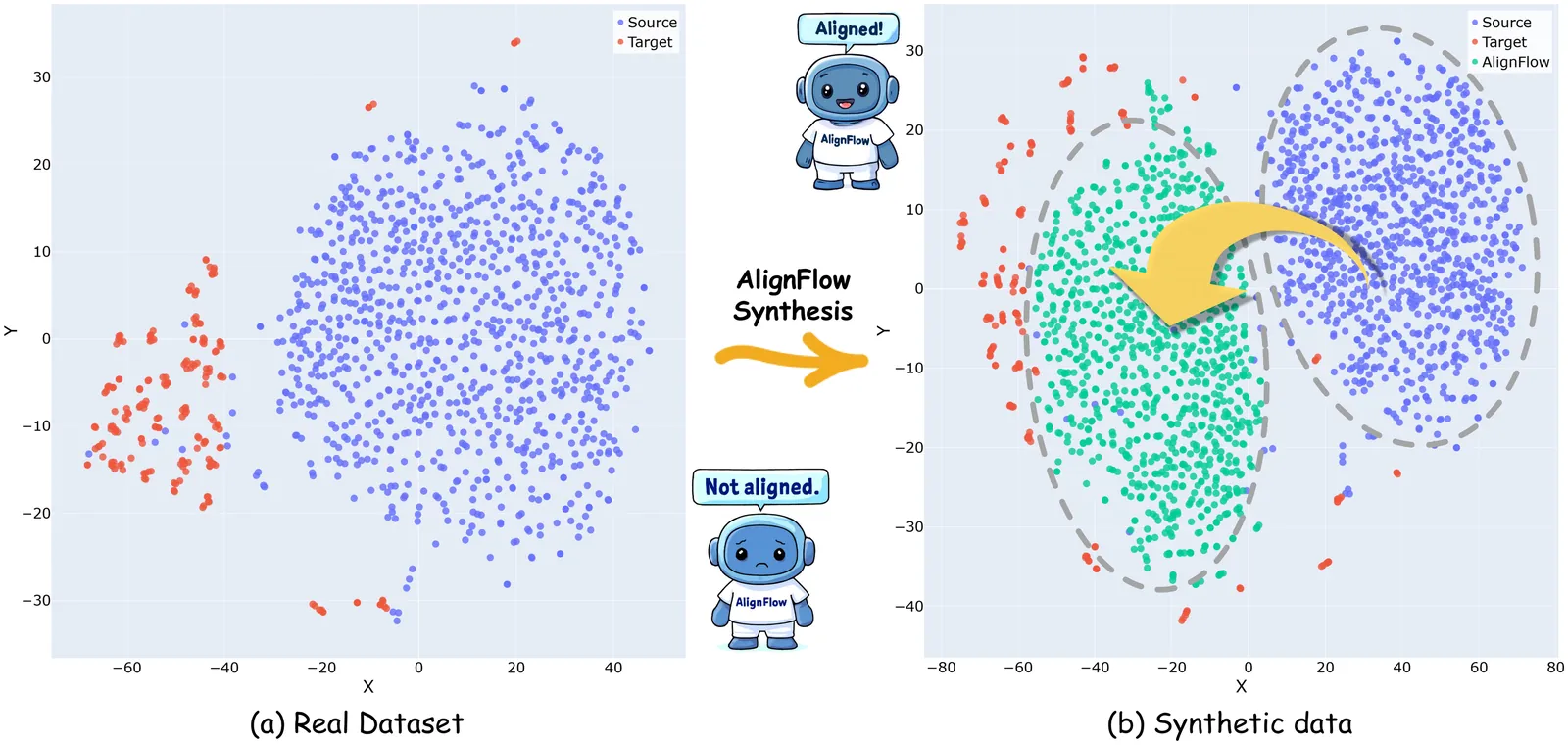

Few-Shot Distribution-Aligned Flow Matching for Data Synthesis in Medical Image Segmentation

Data heterogeneity hinders clinical deployment of medical image analysis models, and generative data augmentation helps mitigate this issue. However, recent diffusion-based methods that synthesize image-mask pairs often ignore distribution shifts between generated and real images across scenarios, and such mismatches can markedly degrade downstream performance. To address this issue, we propose AlignFlow, a flow matching model that aligns with the target reference image distribution via differentiable reward fine-tuning, and remains effective even when only a small number of reference images are provided. Specifically, we divide the training of the flow matching model into two stages: in the first stage, the model fits the training data to generate plausible images; in the second stage, we introduce a distribution alignment mechanism and employ a differentiable reward to steer the generated images toward the distribution of the given samples from the target domain. In addition, to enhance the diversity of generated masks, we also design a flow-matching-based mask generation step to complement the diversity in regions of interest. Extensive experiments demonstrate the effectiveness of our approach, i.e., performance improvement by 3.5-4.0% in mDice and 3.5-5.6% in mIoU across a variety of datasets and scenarios.

2604.02868 Apr 2026

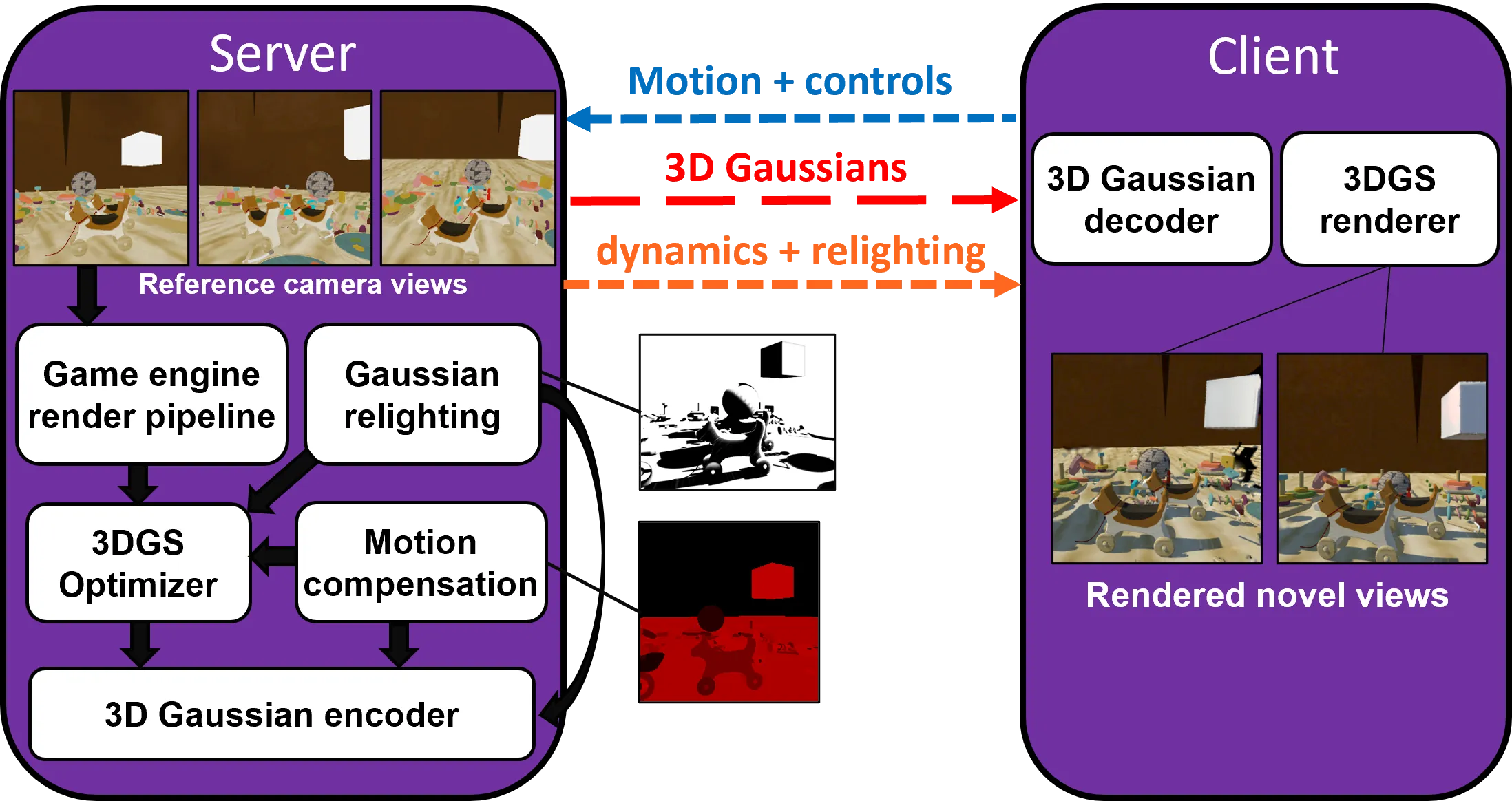

Streaming Real-Time Rendered Scenes as 3D Gaussians

Cloud rendering is widely used in gaming and XR to overcome limited client-side GPU resources and to support heterogeneous devices. Existing systems typically deliver the rendered scene as a 2D video stream, which tightly couples the transmitted content to the server-rendered viewpoint and limits latency compensation to image-space reprojection or warping. In this paper, we investigate an alternative approach based on streaming a live 3D Gaussian Splatting (3DGS) scene representation instead of only rendered video. We present a Unity-based prototype in which a server constructs and continuously optimizes a 3DGS model from real-time rendered reference views, while streaming the evolving representation to remote clients using full model snapshots and incremental updates supporting relighting and rigid object dynamics. The clients reconstruct the streamed Gaussian model locally and render their current viewpoint from the received representation. This approach aims to improve viewpoint flexibility for latency compensation and to better amortize server-side scene modeling across multiple users than per-user rendering and video streaming. We describe the system design, evaluate it, and compare it with conventional image warping.

2604.02851 Apr 2026

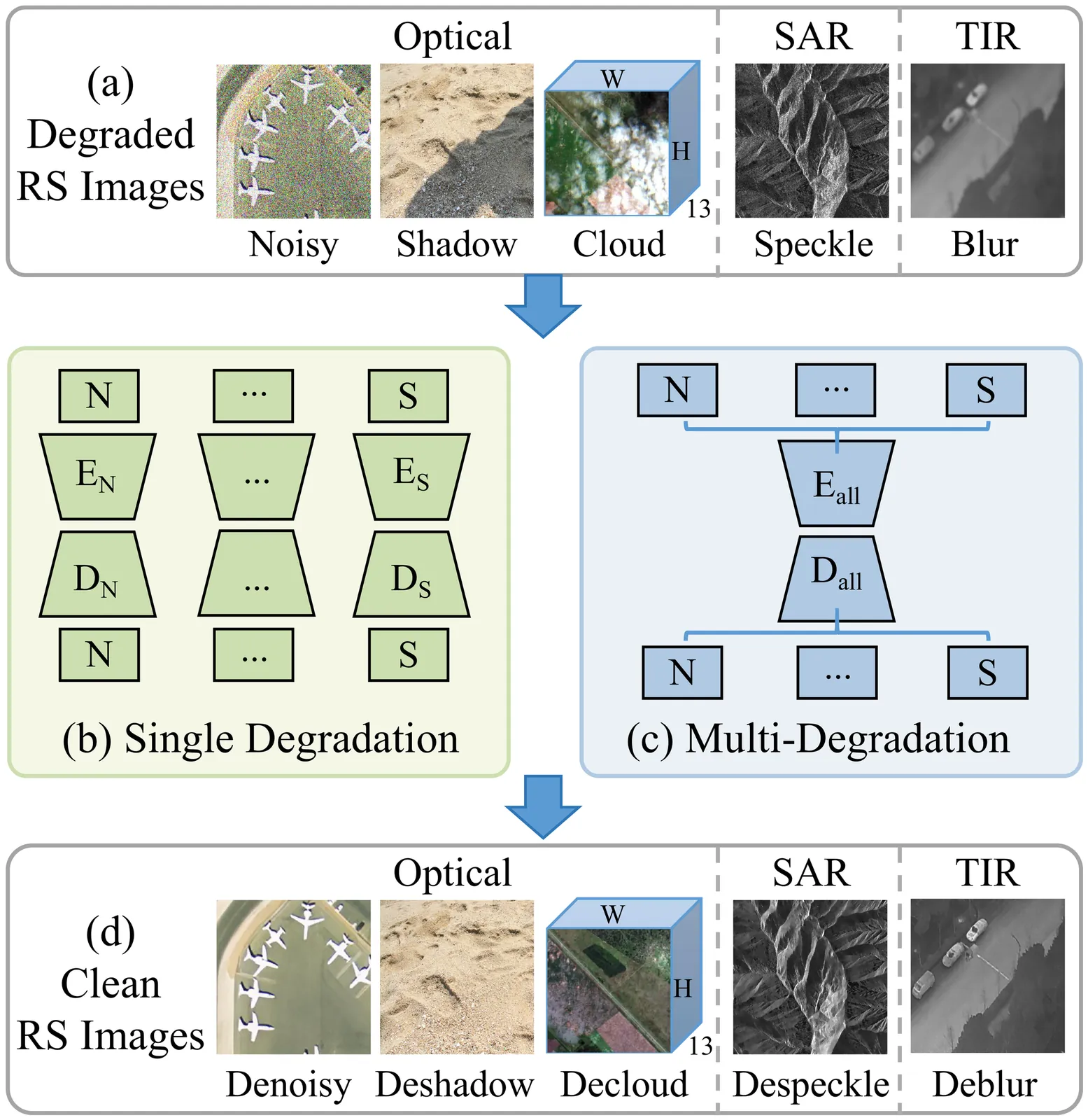

Task-Guided Prompting for Unified Remote Sensing Image Restoration

Remote sensing image restoration (RSIR) is essential for recovering high-fidelity imagery from degraded observations, enabling accurate downstream analysis. However, most existing methods focus on single degradation types within homogeneous data, restricting their practicality in real-world scenarios where multiple degradations often occur across diverse spectral bands or sensor modalities, creating a significant operational bottleneck. To address this fundamental gap, we propose TGPNet, a unified framework capable of handling denoising, cloud removal, shadow removal, deblurring, and SAR despeckling within a single architecture. The core of our framework is a novel Task-Guided Prompting (TGP) strategy. TGP leverages learnable, task-specific embeddings to generate degradation-aware cues, which then hierarchically modulate features throughout the decoder. This task-adaptive mechanism allows the network to precisely tailor its restoration process for distinct degradation patterns while maintaining a single set of shared weights. To validate our framework, we construct a unified RSIR benchmark covering RGB, multispectral, SAR, and thermal infrared modalities for the five aforementioned restoration tasks. Experimental results demonstrate that TGPNet achieves state-of-the-art performance on both unified multi-task scenarios and unseen composite degradations, surpassing even specialized models in individual domains such as cloud removal. By successfully unifying heterogeneous degradation removal within a single adaptive framework, this work presents a significant advancement for multi-task RSIR, offering a practical and scalable solution for operational pipelines. The code and benchmark will be released at https://github.com/huangwenwenlili/TGPNet.

2604.02742 Apr 2026

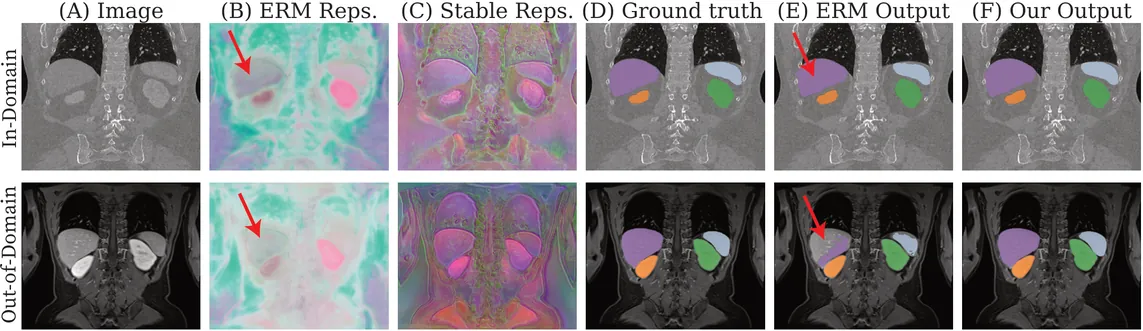

Why Invariance is Not Enough for Biomedical Domain Generalization and How to Fix It

We present DropGen, a simple and theoretically-grounded approach for domain generalization in 3D biomedical image segmentation. Modern segmentation models degrade sharply under shifts in modality, disease severity, clinical sites, and other factors, creating brittle models that limit reliable deployment. Existing domain generalization methods rely on extreme augmentations, mixing domain statistics, or architectural redesigns, yet incur significant implementation overhead and yield inconsistent performance across biomedical settings. DropGen instead proposes a principled learning strategy with minimal overhead that leverages both source-domain image intensities and domain-stable foundation model representations to train robust segmentation models. As a result, DropGen achieves strong gains in both fully supervised and few-shot segmentation across a broad range of shifts in biomedical studies. Unlike prior approaches, DropGen is architecture- and loss-agnostic, compatible with standard augmentation pipelines, computationally lightweight, and tackles arbitrary anatomical regions. Our implementation is freely available at https://github.com/sebodiaz/DropGen.

2604.02564 Apr 2026

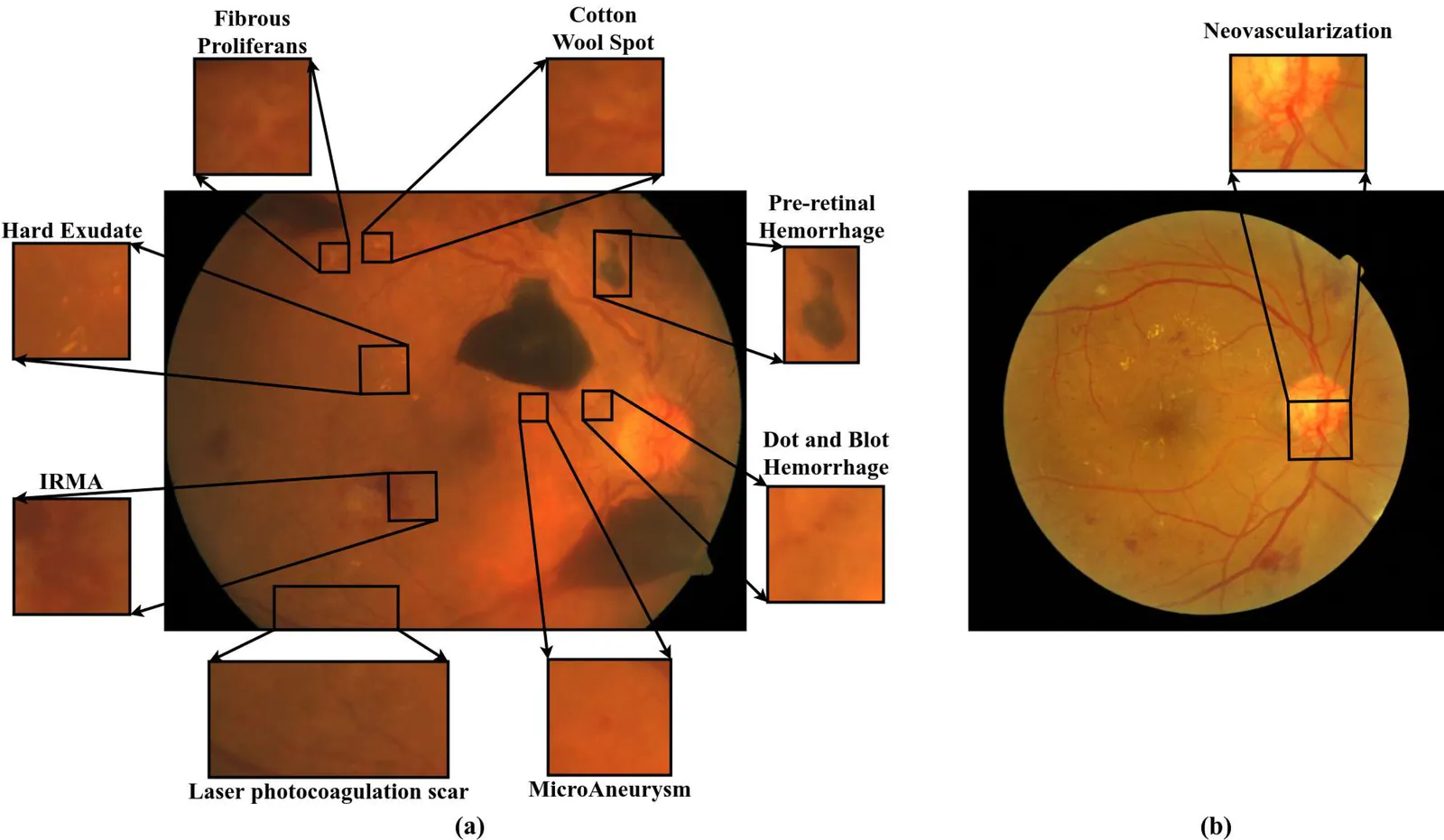

Managing Diabetic Retinopathy with Deep Learning: A Data Centric Overview

Diabetic Retinopathy (DR) is a serious microvascular complication of diabetes, and one of the leading causes of vision loss worldwide. Although automated detection and grading with Deep Learning (DL) can reduce the burden on ophthalmologists, it is constrained by the limited availability of high-quality datasets. Existing repositories often remain geographically narrow, contain limited samples, and exhibit inconsistent annotations or variable image quality, thereby restricting their clinical reliability. This paper presents a comprehensive review and comparative analysis of fundus image datasets used in the management of DR. The study evaluates their usability across key tasks, including binary classification, severity grading, lesion localization, and multi-disease screening. It also categorizes the datasets by size, accessibility, and annotation type (such as image-level, lesion-level, and multi-disease). Finally, a recently published dataset is presented as a case study to illustrate broader challenges in dataset curation and usage. The review consolidates current knowledge while highlighting persistent gaps such as the lack of standardized lesion-level annotations and longitudinal data. It also outlines recommendations for future dataset development to support clinically reliable and explainable solutions in DR screening.

2604.02448 Apr 2026

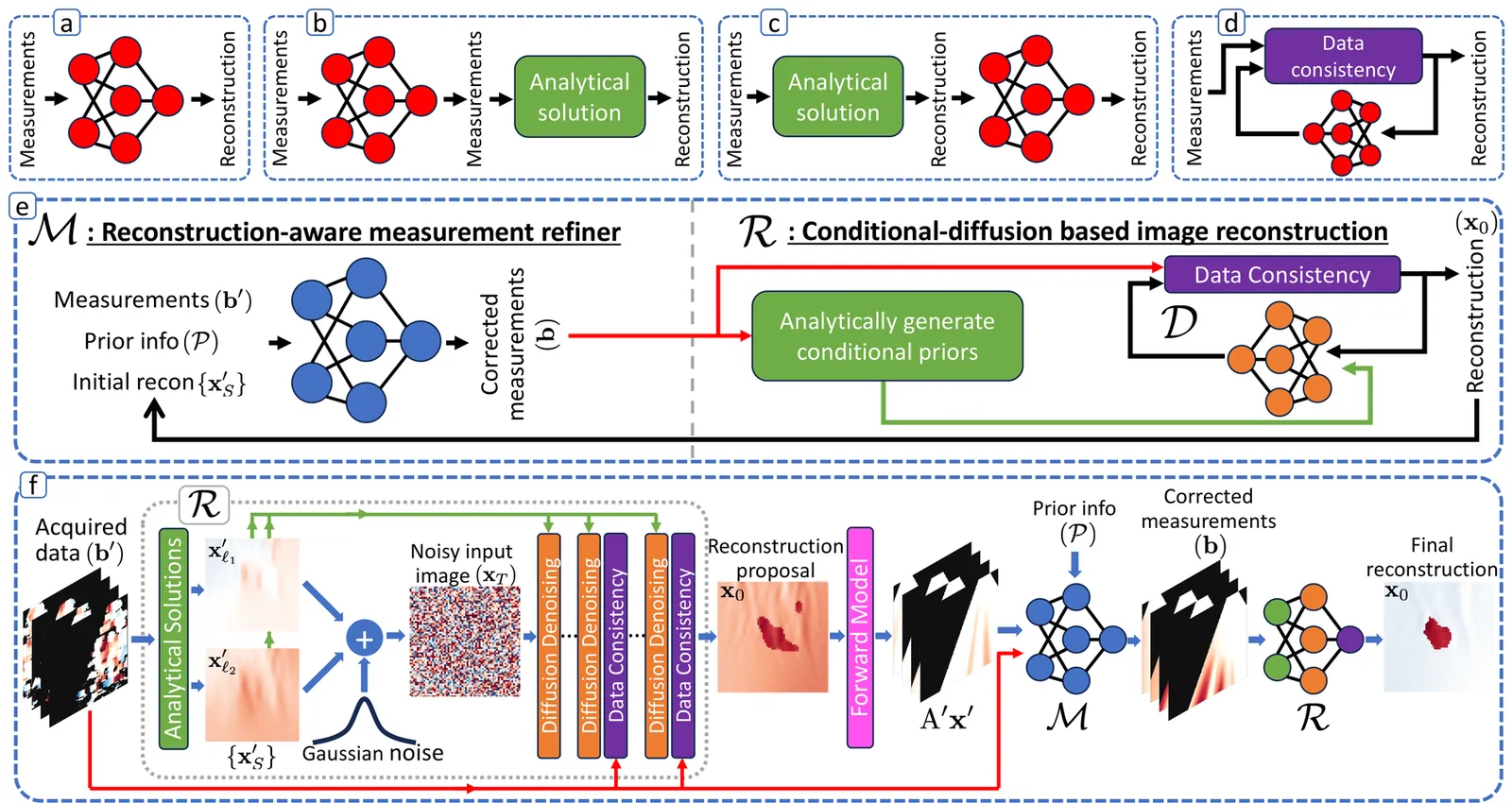

DenOiS: Dual-Domain Denoising of Observation and Solution in Ultrasound Image Reconstruction

Medical imaging aims to recover underlying tissue properties, using inexact (simplified/linearized) imaging models and often from inaccurate and incomplete measurements. Analytical reconstruction methods rely on hand-crafted regularization, which is sensitive to noise assumptions and parameter tuning. Among deep learning alternatives, plug-and-play (PnP) approaches learn regularization while incorporating imaging physics during inference, outperforming purely data-driven methods. The performance of all these approaches, however, still strongly depends on measurement quality and imaging model accuracy. In this work, we propose DenOiS, a framework that denoises both the input observations and the resulting solution in their respective domains. It consists of an observation refinement strategy that corrects degraded measurements while compensating for imaging model simplifications, and a diffusion-based PnP reconstruction approach that remains robust under missing measurements. DenOiS enables generalization to real data from training only in simulations, resulting in high-fidelity image reconstruction with noisy observations and inexact imaging models. We demonstrate this for speed-of-sound imaging as a challenging setting of quantitative ultrasound image reconstruction.

2604.02105 Apr 2026

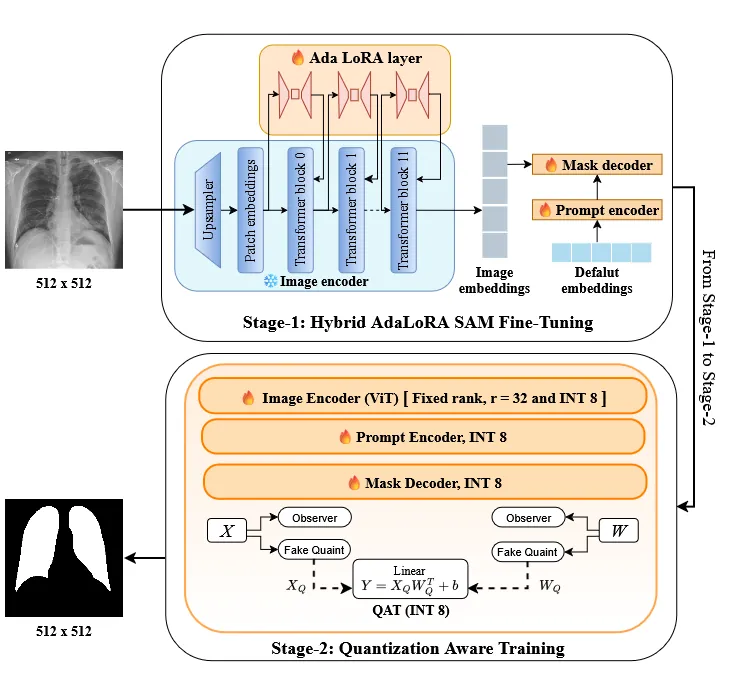

AdaLoRA-QAT: Adaptive Low-Rank and Quantization-Aware Segmentation

Chest X-ray (CXR) segmentation is an important step in computer-aided diagnosis, yet deploying large foundation models in clinical settings remains challenging due to computational constraints. We propose AdaLoRA-QAT, a two-stage fine-tuning framework that combines adaptive low-rank encoder adaptation with full quantization-aware training. Adaptive rank allocation improves parameter efficiency, while selective mixed-precision INT8 quantization preserves structural fidelity crucial for clinical reliability. Evaluated across large-scale CXR datasets, AdaLoRA-QAT achieves 95.6% Dice, matching full-precision SAM decoder fine-tuning while reducing trainable parameters by 16.6× and yielding 2.24× model compression. A Wilcoxon signed-rank test confirms that quantization does not significantly degrade segmentation accuracy. These results demonstrate that AdaLoRA-QAT effectively balances accuracy, efficiency, and structural trustworthiness, enabling compact and deployable foundation models for medical image segmentation. Code and pretrained models are available at: https://prantik-pdeb.github.io/adaloraqat.github.io/

2604.01167 Apr 2026

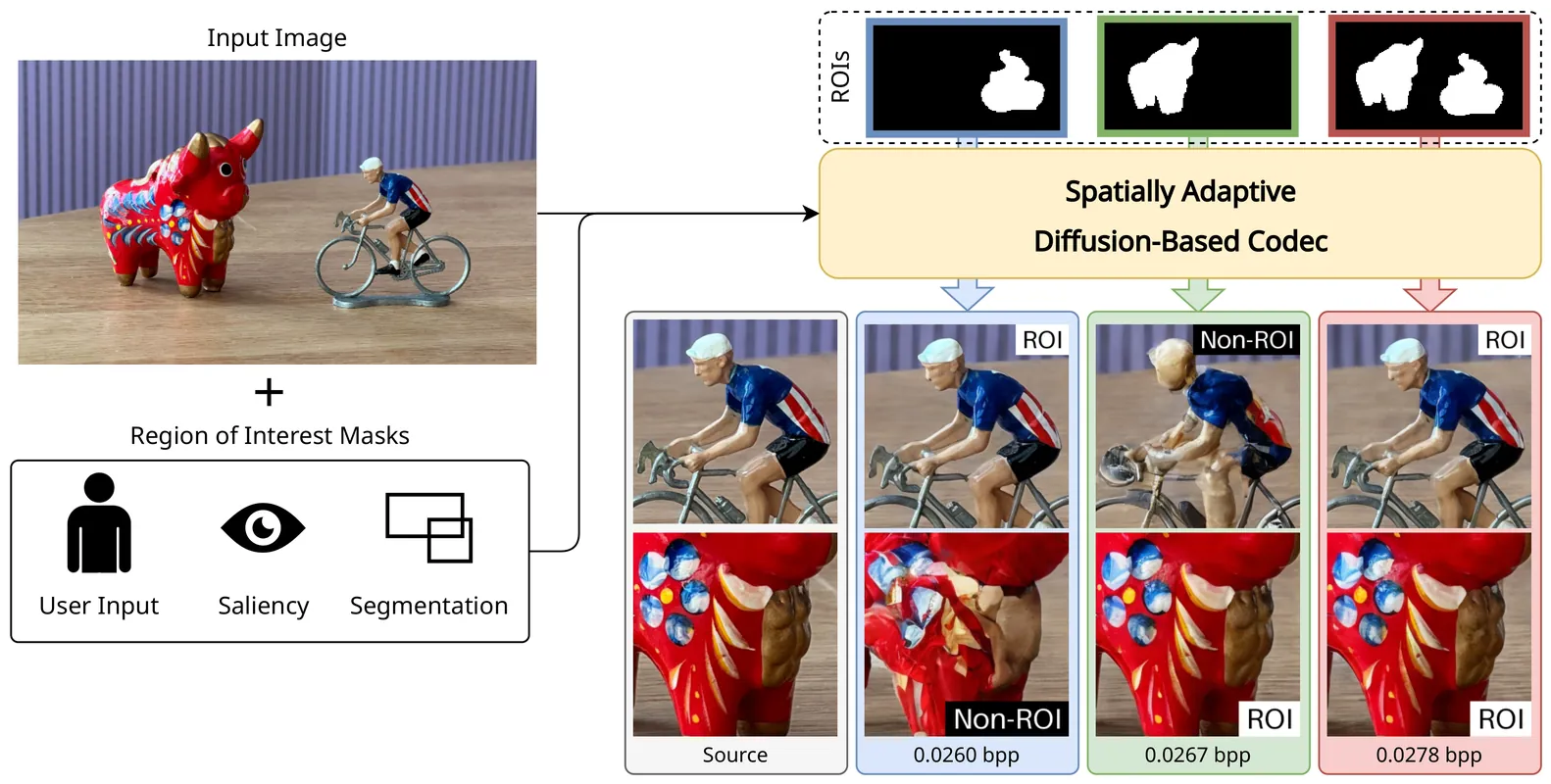

Region-Adaptive Generative Compression with Spatially Varying Diffusion Models

Generative image codecs aim to optimize perceptual quality, producing realistic and detailed reconstructions. However, they often overlook a key property of human vision: our tendency to focus on particular aspects of a visual scene (e.g., salient objects) while giving less importance to other regions. An ideal perceptual codec should be able to exploit this property by allocating more representational capacity to perceptually important areas. To this end, we propose a region-adaptive diffusion-based image codec that supports non-uniform bit allocation within an image. We design a novel spatially varying diffusion model capable of denoising varying amounts of noise per pixel according to arbitrary importance maps. We further identify that these maps can serve as effective priors on the latent representation, and integrate them into our entropy model, improving rate-distortion performance. Built on these contributions, our spatially-adaptive diffusion-based codec outperforms state-of-the-art ROI-controllable baselines in both full-image and ROI-masked perceptual quality.

2604.01122 Apr 2026

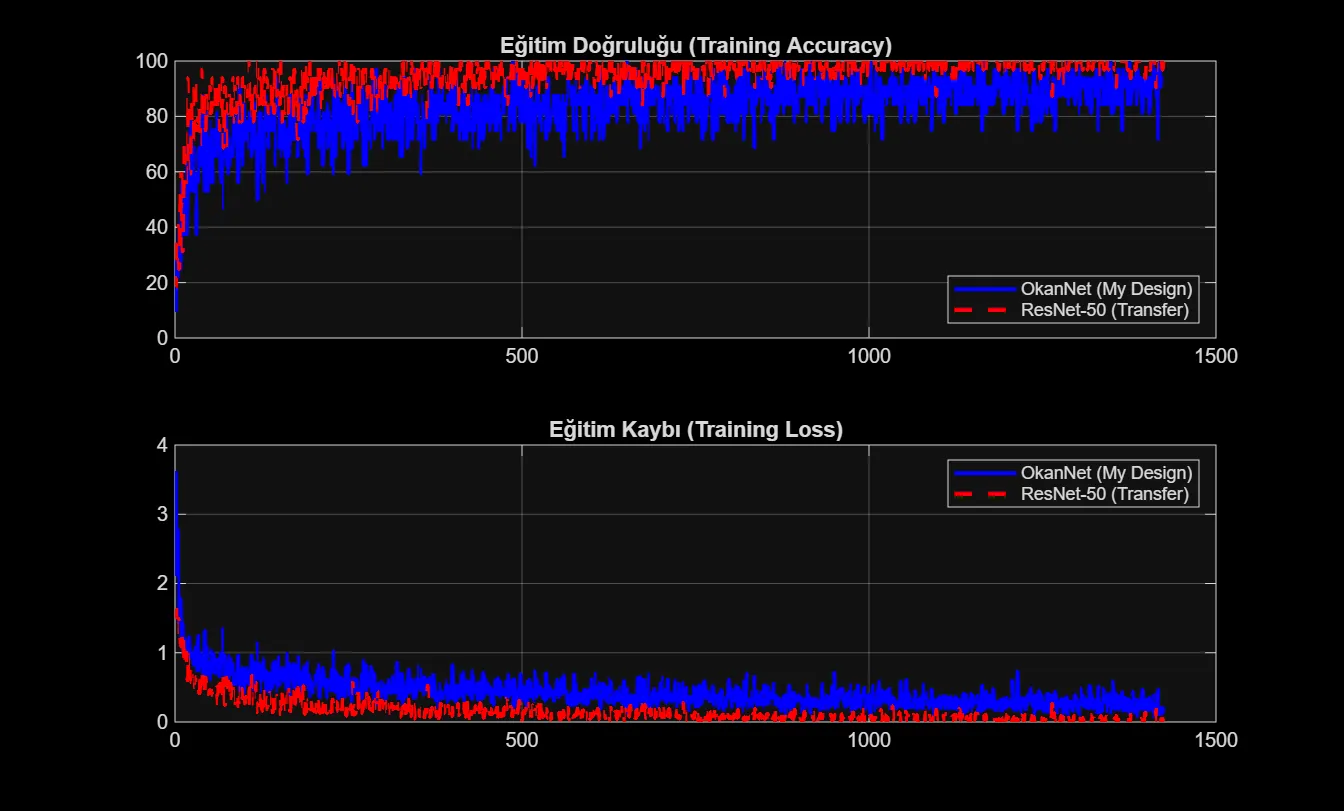

OkanNet: A Lightweight Deep Learning Architecture for Classification of Brain Tumor from MRI Images

Medical imaging techniques, especially Magnetic Resonance Imaging (MRI), are accepted as the gold standard in the diagnosis and treatment planning of neurological diseases. However, the manual analysis of MRI images is a time-consuming process for radiologists and is prone to human error due to fatigue. In this study, two different Deep Learning approaches were developed and analyzed comparatively for the automatic detection and classification of brain tumors (Glioma, Meningioma, Pituitary, and No Tumor). In the first approach, a custom Convolutional Neural Network (CNN) architecture named "OkanNet", which has a low computational cost and fast training time, was designed from scratch. In the second approach, the Transfer Learning method was applied using the 50-layer ResNet-50 [1] architecture, pre-trained on the ImageNet dataset. In experiments conducted on an extended dataset compiled by Masoud Nickparvar containing a total of 7,023 MRI images, the Transfer Learning-based ResNet-50 model exhibited superior classification performance, achieving 96.49% Accuracy and 0.963 Precision. In contrast, the custom OkanNet architecture reached an accuracy rate of 88.10%; however, it proved to be a strong alternative for mobile and embedded systems with limited computational power by yielding results approximately 3.2 times faster (311 seconds) than ResNet-50 in terms of training time. This study demonstrates the trade-off between model depth and computational efficiency in medical image analysis through experimental data.

2604.01264 Apr 2026

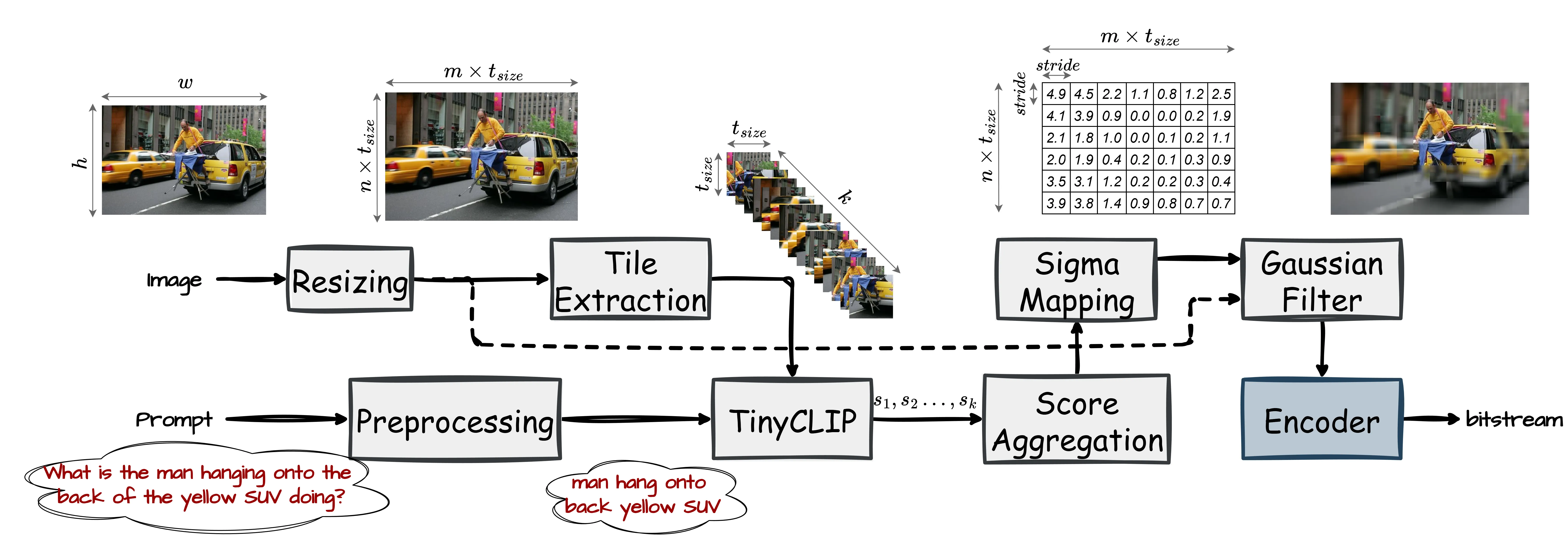

Prompt-Guided Prefiltering for VLM Image Compression

The rapid progress of large Vision-Language Models (VLMs) has enabled a wide range of applications, such as image understanding and Visual Question Answering (VQA). Query images are often uploaded to the cloud, where VLMs are typically hosted, hence efficient image compression becomes crucial. However, traditional human-centric codecs are suboptimal in this setting because they preserve many task-irrelevant details. Existing Image Coding for Machines (ICM) methods also fall short, as they assume a fixed set of downstream tasks and cannot adapt to prompt-driven VLMs with an open-ended variety of objectives. We propose a lightweight, plug-and-play, prompt-guided prefiltering module to identify image regions most relevant to the text prompt, and consequently to the downstream task. The module preserves important details while smoothing out less relevant areas to improve compression efficiency. It is codec-agnostic and can be applied before conventional and learned encoders. Experiments on several VQA benchmarks show that our approach achieves a 25-50% average bitrate reduction while maintaining the same task accuracy. Our source code is available at https://github.com/bardia-az/pgp-vlm-compression.

2604.00314 Mar 2026
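
The prefiltering idea in the abstract above (keep detail where the prompt is relevant, smooth elsewhere before handing the image to any codec) can be sketched as a per-pixel blend. This is only an illustration under assumptions: the relevance map here is hand-made rather than predicted from a text prompt, a box blur stands in for whatever smoothing the authors use, and the names `box_blur` and `prefilter` are hypothetical.

```python
import numpy as np

def box_blur(image, radius):
    """Mean filter with edge padding; a stand-in for any smoothing filter."""
    height, width = image.shape
    padded = np.pad(image.astype(float), radius, mode="edge")
    size = 2 * radius + 1
    total = np.zeros((height, width))
    # Sum every shifted copy of the image inside the (size x size) window.
    for dy in range(size):
        for dx in range(size):
            total += padded[dy:dy + height, dx:dx + width]
    return total / (size * size)

def prefilter(image, relevance, radius=2):
    """Blend original and blurred pixels; relevance 1 keeps full detail."""
    relevance = np.clip(relevance, 0.0, 1.0)
    return relevance * image + (1.0 - relevance) * box_blur(image, radius)

rng = np.random.default_rng(0)
image = rng.uniform(0, 255, size=(16, 16))

# Hypothetical relevance map: only the central block matters to the prompt.
relevance = np.zeros((16, 16))
relevance[4:12, 4:12] = 1.0

filtered = prefilter(image, relevance)
```

The relevant block passes through unchanged while the surround loses high-frequency content, which is what lets a downstream encoder spend fewer bits there. Because the filter only modifies pixels, it can sit in front of a conventional or learned encoder without changing the codec itself.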
