Trending in Economic Theory

Procurement without Priors: A Simple Mechanism and its Notable Performance

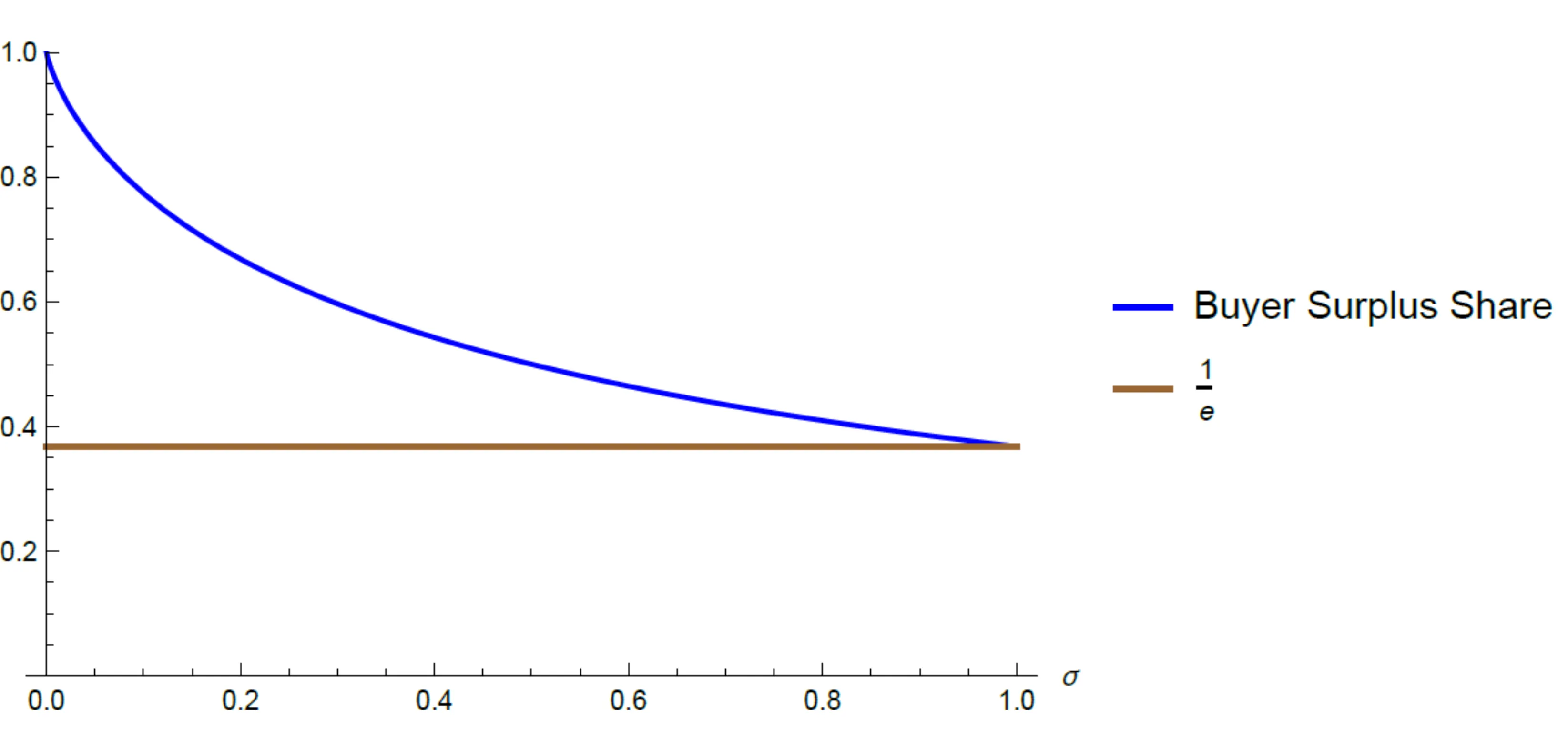

How should a buyer design procurement mechanisms when suppliers' costs are unknown and the buyer does not have a prior belief? We demonstrate that simple mechanisms, which share a constant fraction of the buyer's utility with the seller, allow the buyer to realize a guaranteed positive fraction of the efficient social surplus across all possible costs. Moreover, a judicious choice of the share based on the known demand maximizes the surplus-ratio guarantee that can be attained across all possible (arbitrarily complex and nonlinear) mechanisms and cost functions. Similar results hold in related nonlinear pricing and optimal regulation problems.
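As a rough illustration of the sharing idea, the sketch below assumes one reading of the mechanism. The supplier is paid a fixed share `alpha` of the buyer's gross utility `value(q)` and then chooses the quantity that maximizes its own profit. The value and cost functions and the quantity grid are hypothetical. They are chosen only to make the surplus ratio (the buyer's payoff divided by the efficient surplus) computable. The contract itself never uses `cost`. The code does not reproduce the paper's optimal share or its guarantee.

```python
import math


def shared_fraction_outcome(alpha, value, cost, grid):
    """Outcome when the supplier is paid alpha * value(q) for delivering q."""
    # The supplier picks the quantity that maximizes its own profit under the share.
    q_alpha = max(grid, key=lambda q: alpha * value(q) - cost(q))
    buyer_payoff = (1 - alpha) * value(q_alpha)
    # Efficient benchmark: the largest total surplus value(q) - cost(q) on the grid.
    efficient_surplus = max(value(q) - cost(q) for q in grid)
    return q_alpha, buyer_payoff / efficient_surplus


def value(q):
    return math.sqrt(q)      # hypothetical concave buyer utility


def cost(q):
    return 0.5 * q * q       # hypothetical convex supplier cost, never seen by the contract


grid = [i / 1000 for i in range(2001)]  # quantities 0.000, 0.001, ..., 2.000

for alpha in (0.25, 0.5, 0.75):
    q, ratio = shared_fraction_outcome(alpha, value, cost, grid)
    print(f"alpha={alpha:.2f}  q={q:.3f}  surplus ratio={ratio:.3f}")
```

A grid experiment like this only evaluates one cost function at a time. A guarantee across all costs is a statement it cannot establish.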

2512.09129

Dec 2025 · Theoretical Economics

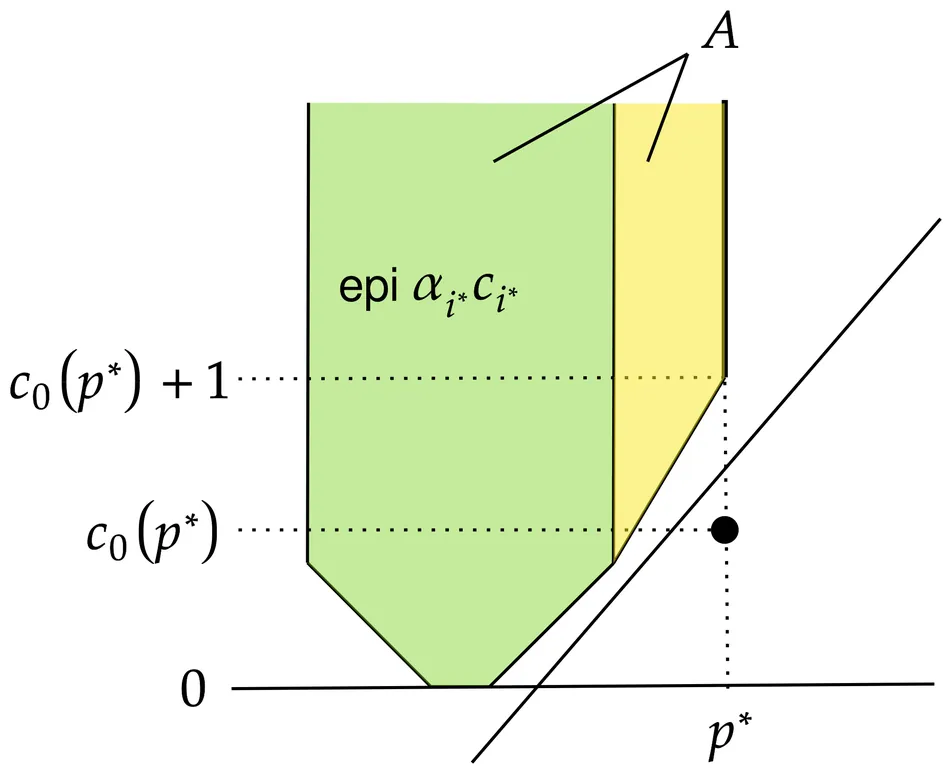

Regulating a Monopolist without Subsidy

We study monopoly regulation under asymmetric information about costs when subsidies are infeasible. A monopolist with a privately known marginal cost serves a single-product market and sets a price. The regulator maximizes a weighted welfare function using unit taxes as its sole policy instrument. We identify a necessary and sufficient condition for laissez-faire to be optimal. When intervention is desired, we provide simple sufficient conditions under which the optimal policy is a progressive price cap: prices below a benchmark face no tax, while higher prices are taxed at increasing and potentially prohibitive rates. This policy combines delegation at low prices with taxation at high prices, balancing access, affordability, and profitability. Our results clarify when taxes act as complements to subsidies and when they serve only as imperfect substitutes, illuminating how feasible policy instruments shape optimal regulatory design.
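The following is a minimal sketch of the shape of a progressive price cap. Two features are illustrative assumptions, not the optimal schedule derived in the paper. First, the tax is quadratic above the benchmark, so the marginal tax rate increases with the price. Second, an optional prohibitive price sets the tax to infinity beyond it. The benchmark, `slope` and `prohibitive_price` are likewise hypothetical.

```python
def progressive_price_cap_tax(price, benchmark, slope, prohibitive_price=None):
    """Per-unit tax owed at a given price under a hypothetical progressive cap."""
    if price <= benchmark:
        # Delegation region: the monopolist prices freely and no tax is levied.
        return 0.0
    if prohibitive_price is not None and price >= prohibitive_price:
        # Prohibitive region: an infinite tax effectively rules these prices out.
        return float("inf")
    # Taxed region: the tax grows quadratically, so its rate rises with the price.
    return slope * (price - benchmark) ** 2


for p in (8.0, 10.0, 11.0, 12.0, 14.0, 16.0):
    print(p, progressive_price_cap_tax(p, benchmark=10.0, slope=0.5, prohibitive_price=15.0))
```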

2512.06525

Dec 2025 · Theoretical Economics

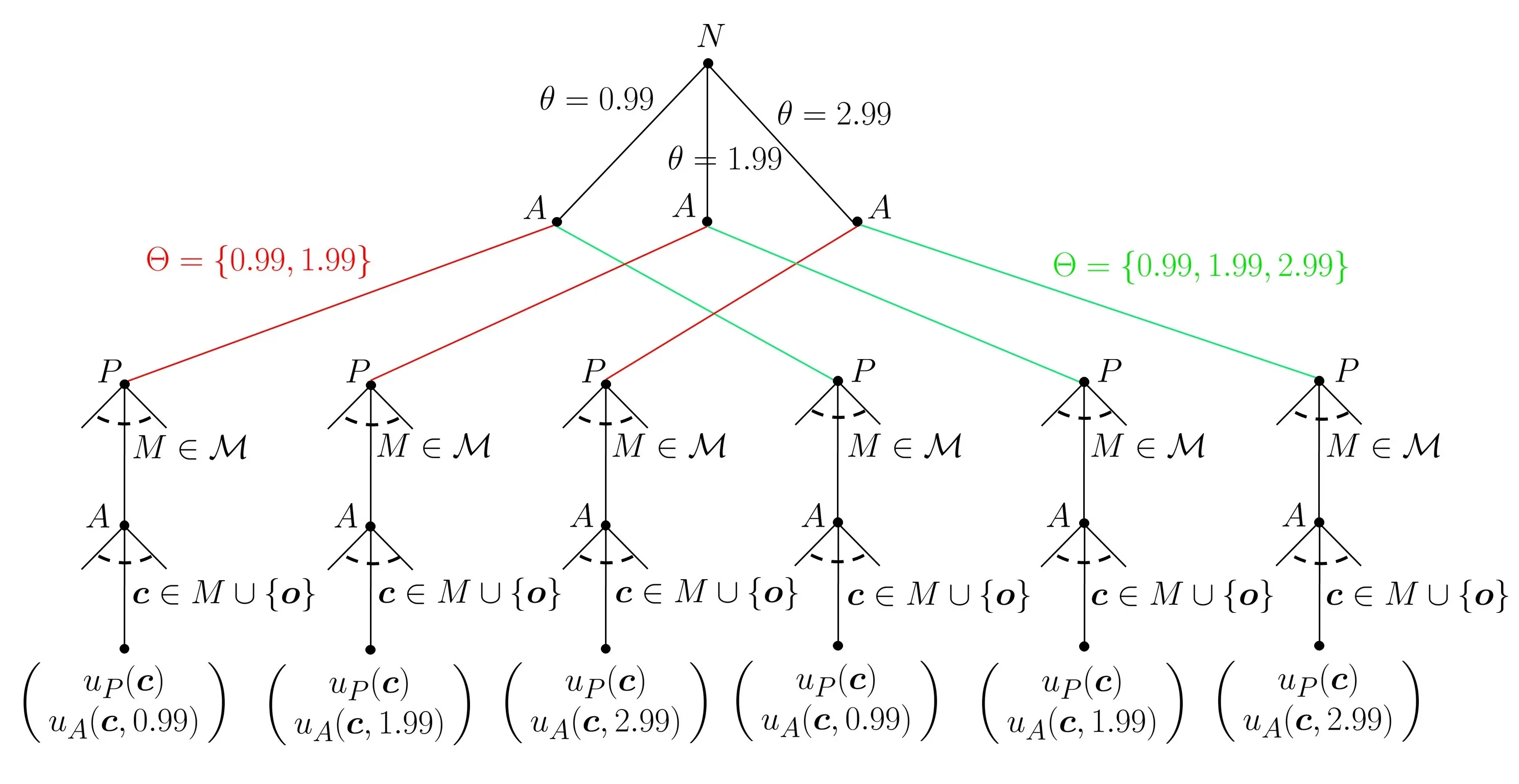

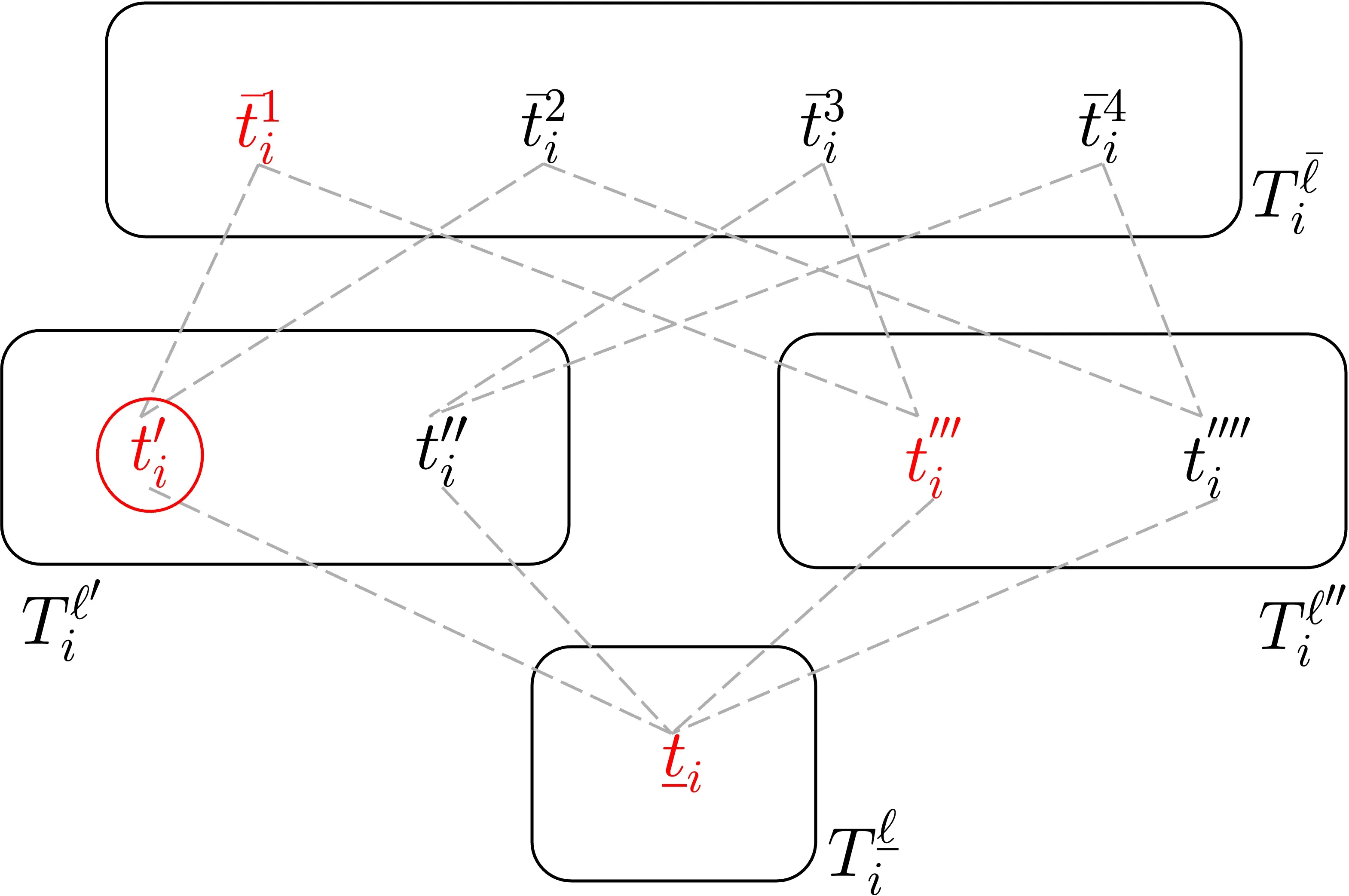

Rationalizable Screening and Disclosure under Unawareness

We analyze a principal-agent procurement problem in which the principal (she) is unaware of some of the marginal cost types of the agent (he). Communication arises naturally, as some types of the agent may have an incentive to raise the principal's awareness (totally or partially) before a contract menu is offered. The resulting menu must reflect not only the principal's change in awareness but also what she learns about types from the agent's decision to raise her awareness in the first place. We capture this reasoning in a discrete concave model via a rationalizability procedure in which marginal beliefs over types are restricted to log-concavity, "reverse" Bayesianism, and mild assumptions of caution. We show that if the principal is ex ante unaware only of high-cost types, all of these types have an incentive to raise her awareness of them; otherwise, they would not be served. With three types, the two lower-cost types that the principal is initially aware of also want to raise her awareness of the high-cost type: their quantities suffer no additional distortions, and they both earn an extra information rent. Intuitively, the presence of an even higher-cost type makes the original two look better. With more than three types, we show that this intuition may break down for types of which the principal is initially aware, so that raising the principal's awareness could cease to be profitable for those types. When the principal is ex ante unaware only of more efficient (low-cost) types, *no type* raises her awareness, leaving her none the wiser.

2510.20918

Oct 2025 · Theoretical Economics

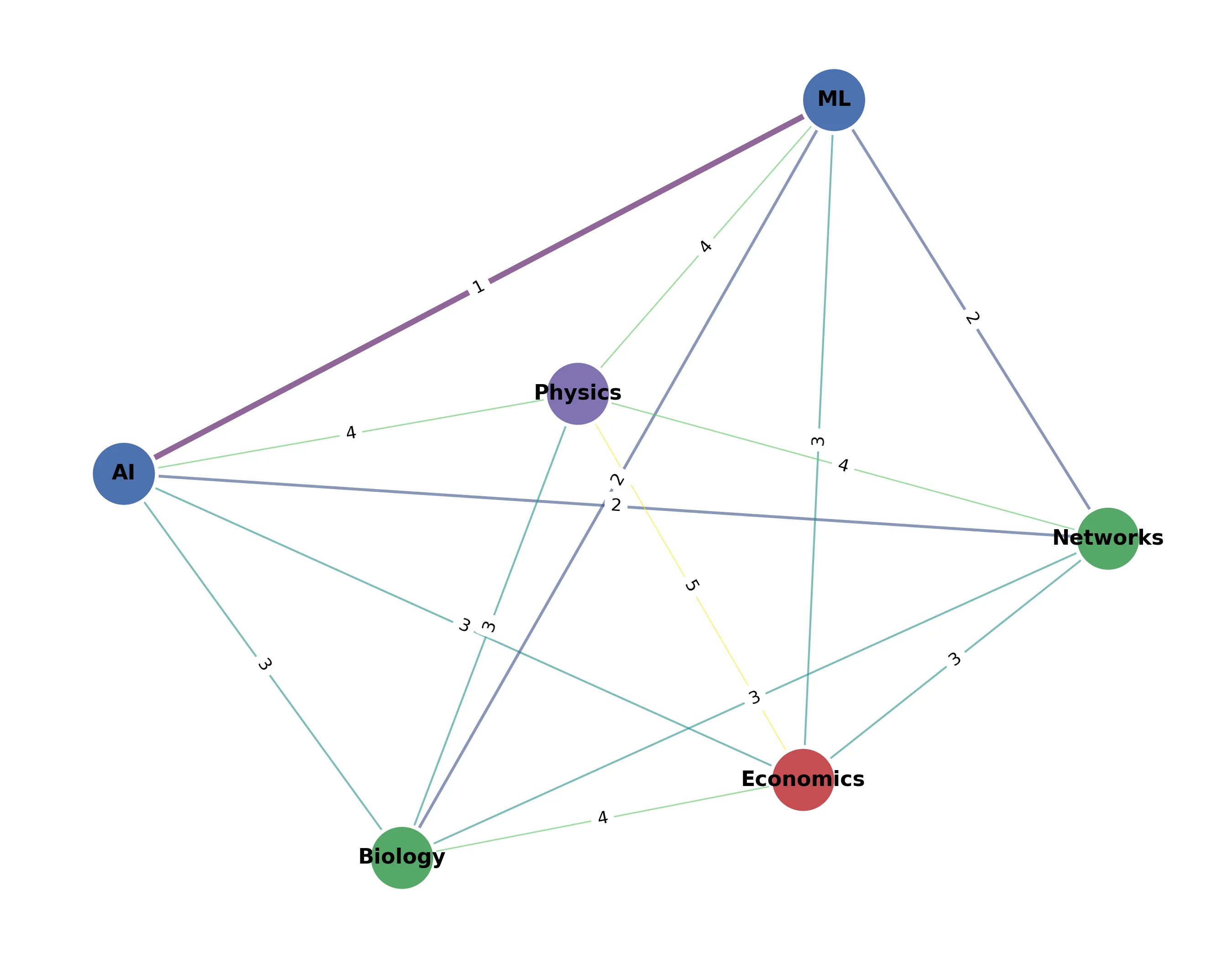

The Economics of AI Foundation Models: Openness, Competition, and Governance

The strategic choice of model "openness" has become a defining issue for the foundation model (FM) ecosystem. While this choice is intensely debated, its underlying economic drivers remain underexplored. We construct a two-period game-theoretic model to analyze how openness shapes competition in an AI value chain, featuring an incumbent developer, a downstream deployer, and an entrant developer. Openness exerts a dual effect: it amplifies knowledge spillovers to the entrant, but it also enhances the incumbent's advantage through a "data flywheel effect," whereby greater user engagement today further lowers the deployer's future fine-tuning cost. Our analysis reveals that the incumbent's optimal first-period openness is surprisingly non-monotonic in the strength of the data flywheel effect. When the data flywheel effect is either weak or very strong, the incumbent prefers a higher level of openness; however, for an intermediate range, it strategically restricts openness to impair the entrant's learning. This dynamic gives rise to an "openness trap," a critical policy paradox where transparency mandates can backfire by removing firms' strategic flexibility, reducing investment, and lowering welfare. We extend the model to show that other common interventions can be similarly ineffective. Vertical integration, for instance, only benefits the ecosystem when the data flywheel effect is strong enough to overcome the loss of a potentially more efficient competitor. Likewise, government subsidies intended to spur adoption can be captured entirely by the incumbent through strategic price and openness adjustments, leaving the rest of the value chain worse off. By modeling the developer's strategic response to competitive and regulatory pressures, we provide a robust framework for analyzing competition and designing effective policy in the complex and rapidly evolving FM ecosystem.

2510.15200

Oct 2025 · Theoretical Economics

2508.12934

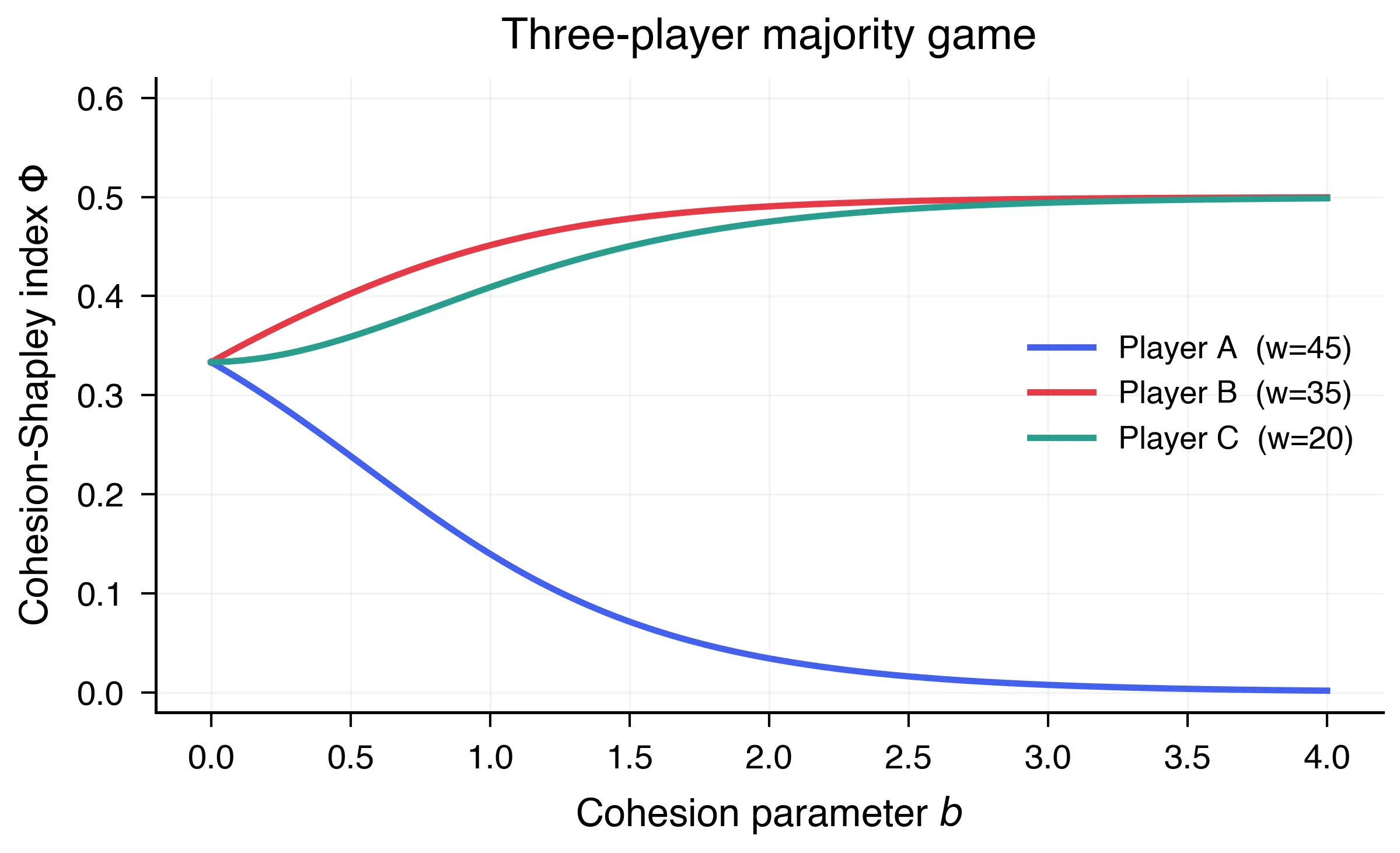

Contest success functions with(out) headstarts

A contest success function (CSF) maps contestants' efforts to their winning probabilities. This paper provides axiomatizations of CSFs with headstarts. The results extend the classic axiomatization of the Tullock CSF and connect to CSFs that allow for draws. The central axiom is relative homogeneity of counterfactual deviation, which requires the pairwise influence of one contestant's effort on an opponent's probabilistic allocation to be scale-invariant. Two fairness axioms and no advantageous reallocation further restrict the admissible functional forms with headstarts. We also introduce dummy consistency, which requires allocations to be consistent with and without inactive contestants, to clarify the relationship with earlier axiomatic work that rules out headstarts. Finally, we discuss an extension that drops the assumption of full allocation.
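For reference, the classic Tullock CSF gives contestant i the probability x_i^r / Σ_j x_j^r. This probability is unchanged when all efforts are scaled by a common factor. The sketch below adds a headstart h_i in one simple additive way, (h_i + x_i)^r. This form is an illustrative assumption, not necessarily the family axiomatized in the paper. It shows that a fixed headstart breaks this plain scale invariance. The equal split when total effort is zero is also an assumed convention.

```python
def tullock_csf(efforts, r=1.0):
    """Classic Tullock CSF: p_i = x_i**r / sum_j x_j**r."""
    weights = [x ** r for x in efforts]
    total = sum(weights)
    if total == 0:
        n = len(efforts)
        return [1.0 / n] * n  # assumed convention when nobody exerts effort
    return [w / total for w in weights]


def additive_headstart_csf(efforts, headstarts, r=1.0):
    """Hypothetical headstart variant: p_i proportional to (h_i + x_i)**r."""
    return tullock_csf([h + x for h, x in zip(headstarts, efforts)], r)


# Scaling all efforts by 10 leaves Tullock probabilities unchanged...
print(tullock_csf([1.0, 2.0, 3.0]), tullock_csf([10.0, 20.0, 30.0]))
# ...but with a fixed headstart for contestant 0 the same scaling shifts them.
print(additive_headstart_csf([1.0, 2.0], [1.0, 0.0]))    # equal odds
print(additive_headstart_csf([10.0, 20.0], [1.0, 0.0]))  # 11/31 vs 20/31
```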

2508.12934

Aug 2025 · Theoretical Economics

When is it (im)possible to respect all individuals' preferences under uncertainty?

When aggregating Subjective Expected Utility preferences, the Pareto principle leads to an impossibility result unless the individuals have a common belief. This paper examines the source of this impossibility in more detail by considering the aggregation of a general class of incomplete preferences that can represent gradual ambiguity perceptions. Our result shows that the planner cannot avoid ignoring some individuals unless there is a probability distribution that all individuals agree is most plausible. This means that even if individuals have similar ambiguity perceptions, the impossibility persists as long as some individual's most plausible belief differs even slightly from that of others.

2508.12542

Aug 2025 · Theoretical Economics

2508.02171

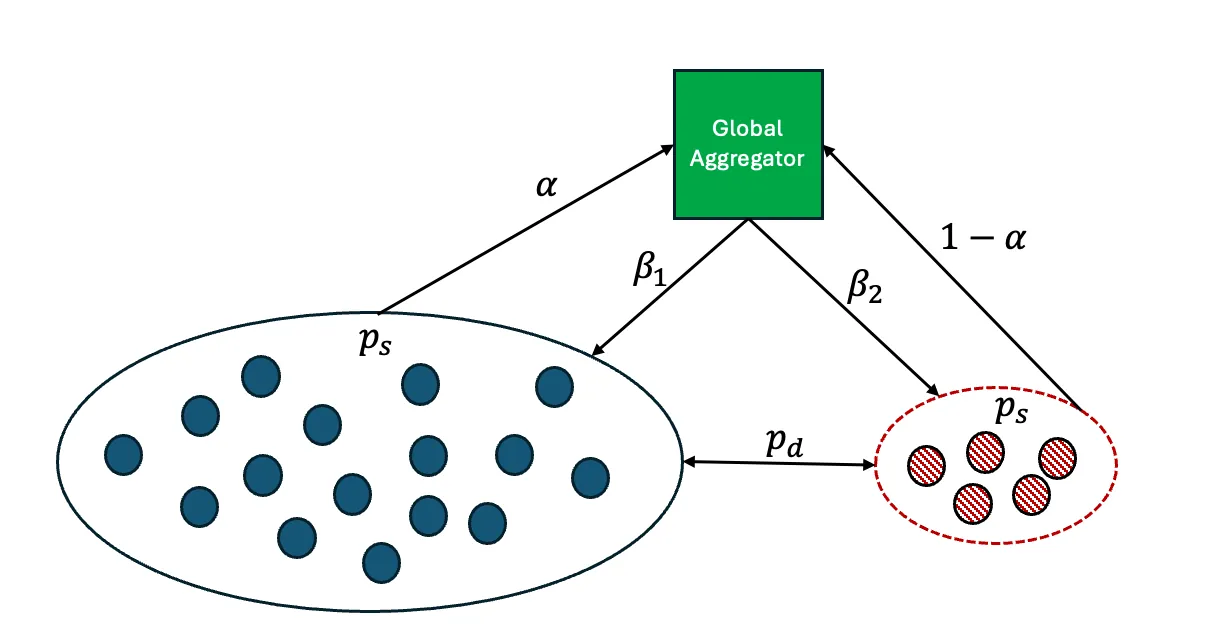

Optimal Transfer Mechanism for Municipal Soft-Budget Constraints in Newfoundland

Newfoundland and Labrador's municipalities face severe soft-budget pressures due to narrow tax bases, high fixed service costs, and volatile resource revenues. We develop a Stackelberg-style mechanism design model in which the province commits at t = 0 to an ex ante grant schedule and an ex post bailout rule. Municipalities privately observe their fiscal-need type; choose effort, investment, and debt; and may receive bailouts when deficits exceed a statutory threshold. Under convexity and single crossing, the problem reduces to one-dimensional screening and admits a tractable transfer mechanism with quadratic bailout costs and a statutory cap. The optimal ex ante rule is threshold-cap; under discretionary rescue at t = 2, it becomes threshold-linear-cap. A knife-edge inequality yields a self-consistent no-bailout regime, and an explicit discount-factor threshold renders hard budgets dynamically credible. We emphasize a class of monotone threshold signal rules; under this class, grant crowd-out is null almost everywhere, which justifies the constant grant weight used in the closed-form expressions. The closed-form characterization provides a policy template that maps to Newfoundland's institutions and clarifies the micro-data required for future calibration.

2508.02171

Aug 2025 · Theoretical Economics

Comment on 'Asset Bubbles and Overlapping Generations'

Tirole (1985) studied an overlapping generations model with capital accumulation and showed that the emergence of asset bubbles solves the capital over-accumulation problem. His Proposition 1(c) claims the following: if the dividend growth rate is above the bubbleless interest rate (the steady-state interest rate in the economy without the asset) but below the population growth rate, then bubbles are necessary. Necessary here means that no bubbleless equilibrium exists but a unique bubbly equilibrium does. We show that this result (as stated) is incorrect by presenting an example economy that satisfies all assumptions of Proposition 1(c) but whose unique equilibrium is bubbleless. We also restore Proposition 1(c) under the additional assumptions that initial capital is sufficiently large and dividends are sufficiently small, and we show through examples that these conditions are essential.

2507.12477

Jul 2025 · Theoretical Economics

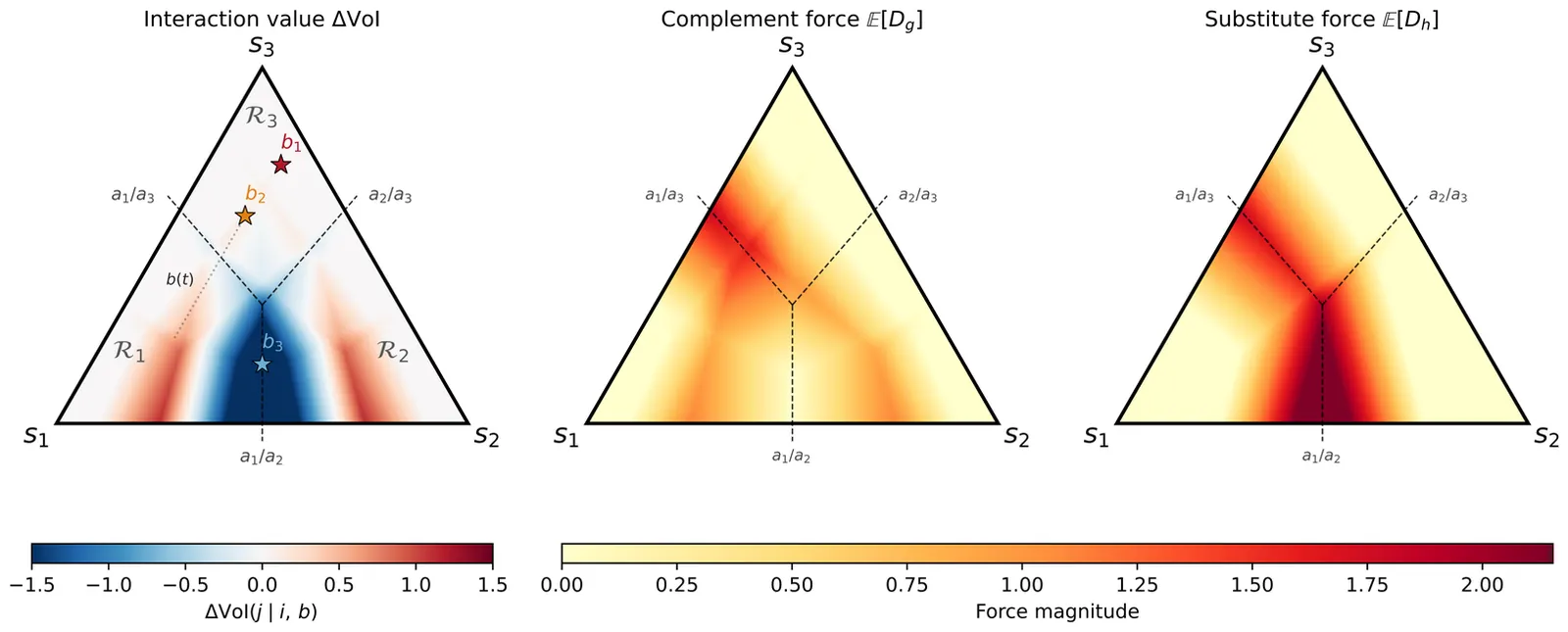

Interactions across multiple games: cooperation, corruption, and organizational design

Teamwork is vital in many settings, and it is socially beneficial for teams to cooperate in some situations ("good games") but not in others ("bad games", e.g., those that allow for corruption). A team's cooperation in any given game depends on expectations of cooperation in future iterations of both good and bad games. We identify when sustaining cooperation on good games necessitates cooperation on bad games. We then characterize how a designer should optimally assign workers to teams, and teams to tasks that involve varying arrival rates of good and bad games. Our results show how organizational design can be used to promote cooperation while minimizing corruption.

2507.03030

Jul 2025 · Theoretical Economics

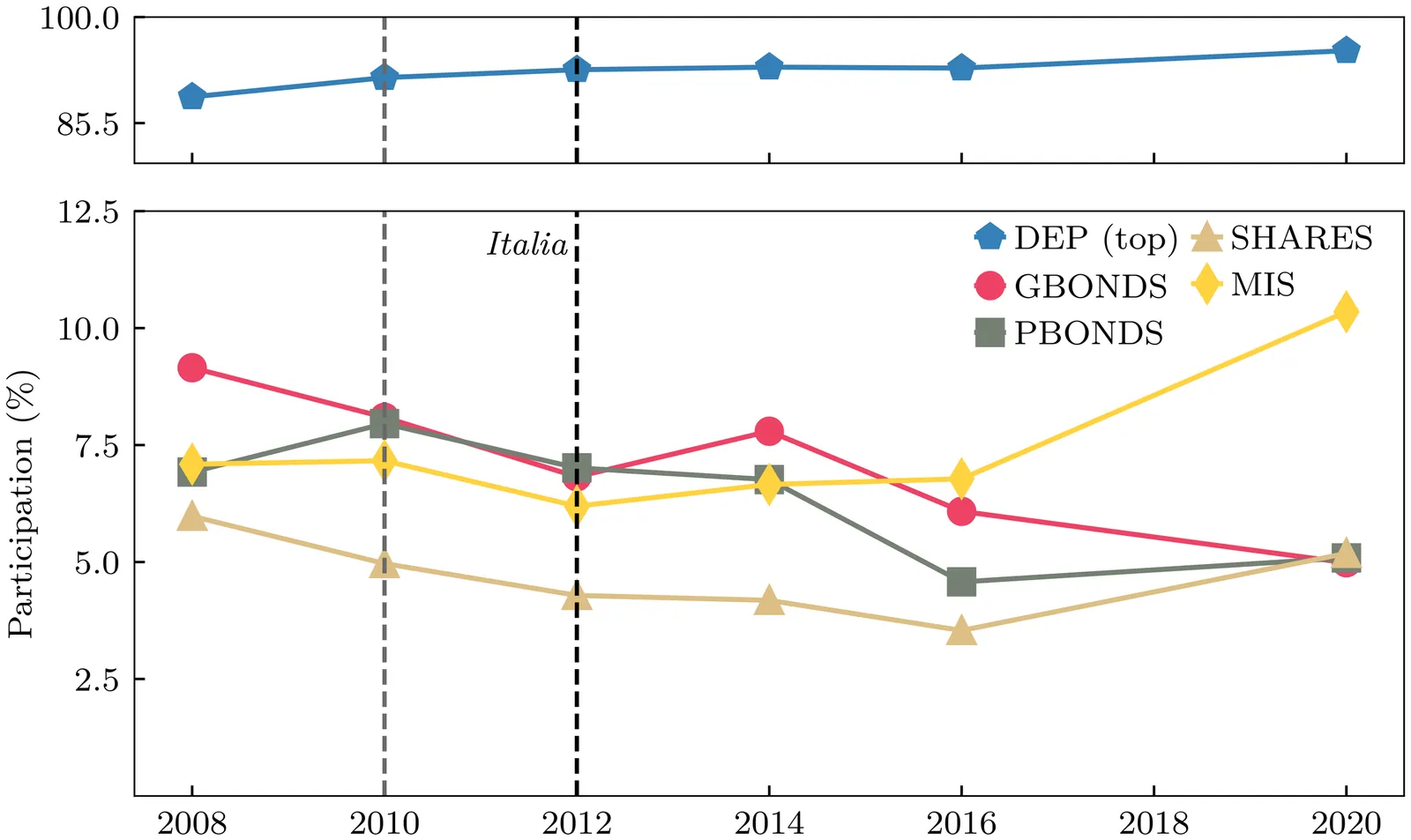

Who's in? Household-targeted Government Policies and the Role of Financial Literacy in Market Participation

This paper examines how household-targeted government policies influence financial market participation conditional on financial literacy, focusing on potential Central Bank Digital Currency (CBDC) adoption. Due to the lack of empirical CBDC data, I use the 2012 introduction of retail Treasury bonds in Italy as a proxy to study how financial literacy affects households' likelihood to engage with a new government-backed retail instrument. Using the Bank of Italy's Survey on Household Income and Wealth, I show that households with some but low financial literacy are more likely to participate in the Treasury bond market than other groups following the introduction of the new instrument. Based on these findings, I develop a theoretical model to study how financial literacy affects CBDC demand through portfolio choice: low-literate households with limited access to risky assets allocate more wealth to CBDC, while high-literate households use risky assets to safeguard against income risk. These results highlight the role of financial literacy in shaping portfolio choices and CBDC adoption.

2506.12575

Jun 2025 · Theoretical Economics

Artificial Intelligence in Team Dynamics: Who Gets Replaced and Why?

This study investigates the effects of artificial intelligence (AI) adoption in organizations. We ask four questions. First, how should a principal optimally deploy limited AI resources to replace workers in a team? Second, in a sequential workflow, which workers face the highest risk of AI replacement? Third, would the principal always prefer to fully utilize all available AI resources, or are there benefits to keeping some AI capacity slack? Fourth, what are the effects of optimal AI deployment on the wage level and intra-team wage inequality? We develop a sequential team production model in which a principal can use peer monitoring, where each worker observes the effort of their predecessor, to discipline team members. The principal may replace some workers with AI agents, whose actions are not subject to moral hazard. Our analysis yields four key results. First, the optimal AI strategy replaces workers stochastically rather than fixating on a single position. Second, the principal replaces workers at the beginning and at the end of the workflow but not the middle worker, who is crucial for sustaining the flow of information obtained through peer monitoring. Third, the principal may optimally underutilize available AI capacity. Fourth, optimal AI adoption increases average wages and reduces intra-team wage inequality.

2506.12337

Jun 2025 · Theoretical Economics

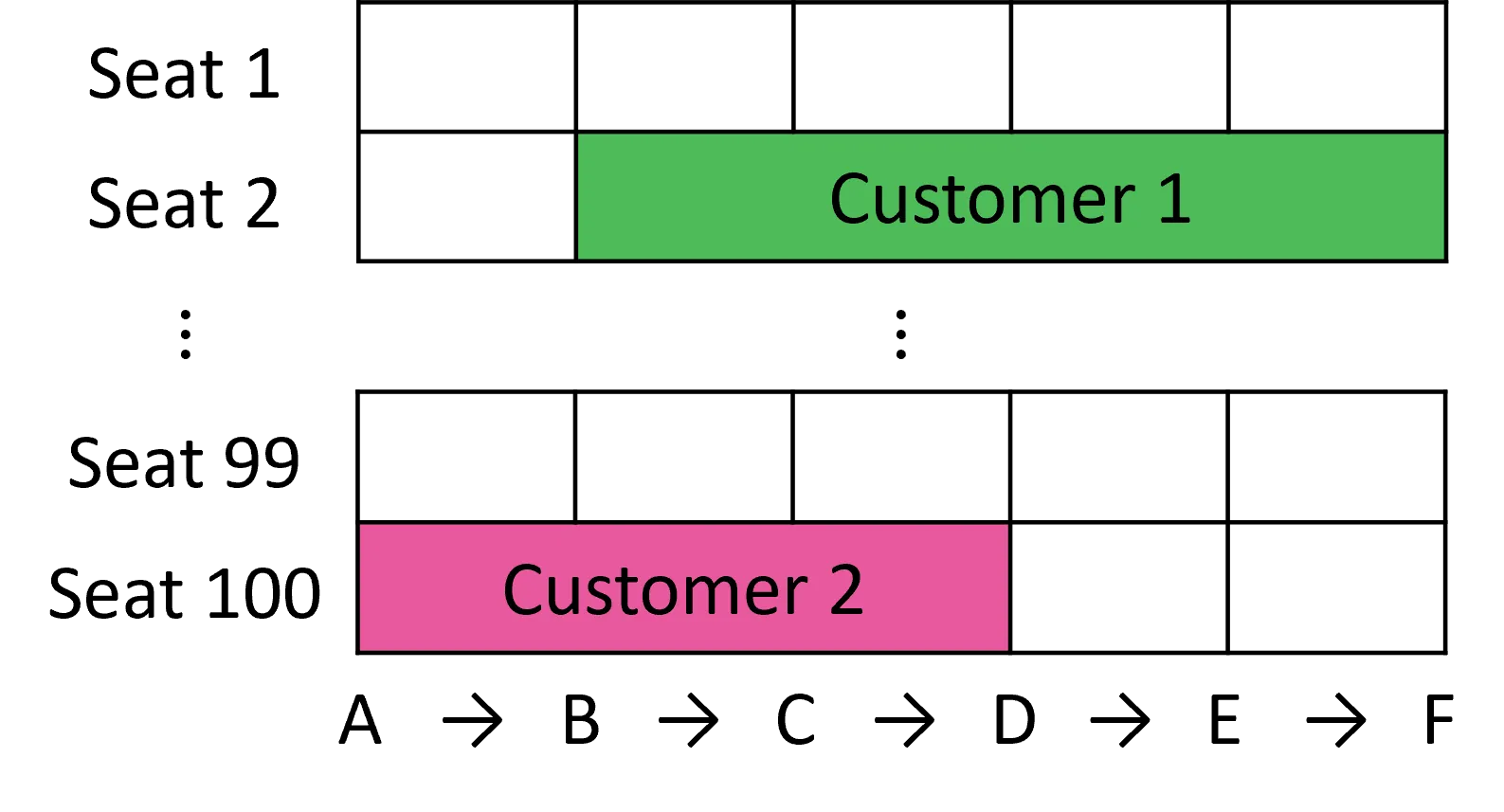

Constant-Factor Algorithms for Revenue Management with Consecutive Stays

We study network revenue management problems motivated by applications such as railway ticket sales and hotel room bookings. Requests, each requiring a resource for a consecutive stay, arrive sequentially with known arrival probabilities. We investigate two scenarios: the accept-or-reject scenario, where a request can be fulfilled by assigning any available resource; and the BAM-based scenario, which generalizes the former by incorporating customer preferences through the basic attraction model (BAM), allowing the platform to offer an assortment of available resources from which the customer may choose. We develop polynomial-time policies and evaluate their performance using approximation ratios, defined as the ratio between the expected revenue of our policy and that of the optimal online algorithm. When each arrival has a fixed request type (e.g., the interval of the stay is fixed), we establish constant-factor guarantees: a ratio of 1 - 1/e for the accept-or-reject scenario and 0.25 for the BAM-based scenario. We further extend these results to the case where the request type is random (e.g., the interval of the stay is random). In this setting, the approximation ratios incur an additional multiplicative factor of 1 - 1/e, resulting in guarantees of at least 0.399 for the accept-or-reject scenario and 0.156 for the BAM-based scenario. These constant-factor guarantees stand in sharp contrast to the prior nonconstant competitive ratios that are benchmarked against the offline optimum.
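The random-type guarantees are the fixed-type ratios multiplied by the extra factor of 1 - 1/e. The arithmetic below checks the numbers quoted above. It gives (1 - 1/e)² ≈ 0.3996, matching the stated 0.399. It also gives 0.25 × (1 - 1/e) ≈ 0.158, which sits just above the stated BAM-based bound of 0.156.

```python
import math

# Fixed request types: 1 - 1/e for accept-or-reject, 0.25 for the BAM-based scenario.
accept_reject_fixed = 1 - 1 / math.e
bam_fixed = 0.25

# Random request types: an additional multiplicative factor of 1 - 1/e.
accept_reject_random = accept_reject_fixed * (1 - 1 / math.e)
bam_random_product = bam_fixed * (1 - 1 / math.e)

print(f"accept-or-reject, fixed type : {accept_reject_fixed:.4f}")   # 0.6321
print(f"accept-or-reject, random type: {accept_reject_random:.4f}")  # 0.3996
print(f"BAM-based, random type       : {bam_random_product:.4f}")    # 0.1580
```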

2506.00909

Jun 2025 · Theoretical Economics

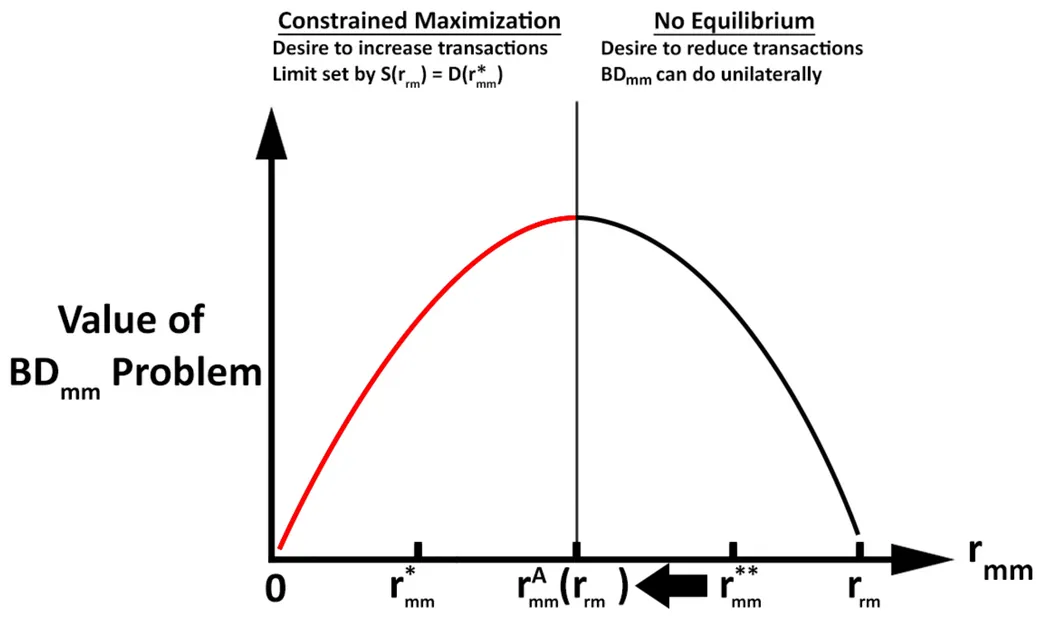

A Smart-Contract to Resolve Multiple Equilibrium in Intermediated Trade

We present a model of a market intermediated by broker-dealers in which there are multiple equilibria. We then design a smart contract that receives messages and algorithmically sends trading instructions. The smart contract resolves the multiplicity of equilibria by implementing broker-dealer joint profit maximization as a Nash equilibrium. This outcome relies on several factors:

- agents' commitment to follow the smart-contract protocol;
- selective privacy of information;
- a structured timing of trade offers and acceptances;
- and, crucially, trust that the smart contract will execute the correct algorithm.

Commitment is achieved by a legal contract or contingent deposit that incentivizes agents to comply with the protocol. Privacy is maintained by using fully homomorphic encryption. The multiplicity of equilibria is resolved by imposing a sequential ordering of trade offers and acceptances. Trust in the smart contract is achieved by appending it to a public blockchain, thereby enabling verification of its computations. This model serves as an example of how a smart contract implemented with cryptography and blockchain can improve market outcomes.

2505.22940

May 2025 · Theoretical Economics

Optimal Auction Design for Dynamic Stochastic Environments: Myerson Meets Naor

Motivated by applications such as cloud computing, gig platforms, and blockchain auctions, we study optimal selling mechanisms for dynamic markets with stochastic supply and demand. In our model, buyers with private valuations and homogeneous goods arrive stochastically and can be held in queues at a cost. The optimal mechanism pairs allocative efficiency with dynamic admission control: goods are assigned to the highest-value buyer, while entry is restricted by value thresholds that strictly increase with the queue length and decrease with available inventory. This policy smooths competitive pressure across time and is implemented in dominant strategies via auctions with dynamic reserve prices.
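The allocation and admission logic described above can be sketched as a toy event-driven market. Goods go to the highest-value waiting buyer, and an arriving buyer is admitted only if its value clears a cutoff. Before clipping at zero, the cutoff strictly increases with queue length and decreases with inventory. The linear cutoff and its parameters are hypothetical. The sketch also omits payments (the dynamic reserve prices that provide dominant-strategy implementation) and queue holding costs, so it is not the paper's optimal mechanism.

```python
import heapq


def admission_threshold(queue_len, inventory, base=1.0, queue_step=0.5, inventory_step=0.3):
    """Hypothetical value cutoff: rises with queue length, falls with inventory."""
    return max(0.0, base + queue_step * queue_len - inventory_step * inventory)


class DynamicMarket:
    """Toy market: threshold-based admission plus highest-value-first allocation."""

    def __init__(self):
        self.queue = []      # waiting buyers' values, negated so heapq acts as a max-heap
        self.inventory = 0   # goods on hand that have not yet been assigned
        self.served = []     # values of buyers who received a good, in order

    def buyer_arrives(self, value):
        """Admit the buyer only if its value clears the current cutoff."""
        cutoff = admission_threshold(len(self.queue), self.inventory)
        if value < cutoff:
            return False
        heapq.heappush(self.queue, -value)
        self._allocate()
        return True

    def good_arrives(self):
        self.inventory += 1
        self._allocate()

    def _allocate(self):
        # Each available good goes to the highest-value buyer still waiting.
        while self.inventory > 0 and self.queue:
            self.served.append(-heapq.heappop(self.queue))
            self.inventory -= 1


market = DynamicMarket()
market.buyer_arrives(2.0)   # cutoff 1.0 with an empty queue: admitted
market.buyer_arrives(1.2)   # cutoff 1.5 with one buyer waiting: turned away
market.buyer_arrives(3.0)   # cutoff 1.5: admitted
market.good_arrives()       # the good goes to the 3.0 buyer
print(market.served)        # [3.0]
```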

2505.22862

May 2025 · Theoretical Economics

Berk-Nash Rationalizability

We study learning in complete-information games, allowing the players' models of their environment to be misspecified. We introduce Berk–Nash rationalizability: the largest self-justified set of actions, meaning that each action in the set is optimal under some belief that is a best fit to outcomes generated by joint play within the set. We show that, in a model where players learn from past actions, every action played (or approached) infinitely often lies in this set. When players have a correct model of their environment, Berk–Nash rationalizability refines (correlated) rationalizability and coincides with it in two-player games. The concept delivers predictions on long-run behavior regardless of whether actions converge, thereby providing a practical alternative to proving convergence or solving complex stochastic learning dynamics. For example, if the rationalizable set is a singleton, actions converge almost surely.

2505.20708

May 2025 · Theoretical Economics

Pricing AI Model Accuracy

This paper examines the market for AI models, in which firms compete to provide accurate model predictions and consumers exhibit heterogeneous preferences for model accuracy. We develop a consumer-firm duopoly model to analyze how competition affects firms' incentives to improve model accuracy. Each firm aims to minimize its model's error, but this choice can often be suboptimal. Counterintuitively, we find that in a competitive market, firms that improve overall accuracy do not necessarily improve their profits. Rather, each firm's optimal decision is to invest further in the error dimension where it has a competitive advantage. Model errors decompose into false positive and false negative rates, and firms can reduce errors in each dimension through investments. Firms are strictly better off investing in their superior dimension and strictly worse off investing in their inferior dimension. Profitable investments adversely affect consumers but increase overall welfare.
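The decomposition into false positive and false negative rates can be made concrete with a small helper. In the hypothetical toy data below, two models make the same number of mistakes, so their overall accuracy is identical. Yet one errs only on the false-positive side and the other only on the false-negative side. This is the kind of asymmetry along which the investment choices above operate.

```python
def error_rates(y_true, y_pred):
    """Return (false positive rate, false negative rate) for 0/1 labels."""
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    negatives = sum(1 for t in y_true if t == 0)
    positives = sum(1 for t in y_true if t == 1)
    fpr = fp / negatives if negatives else 0.0
    fnr = fn / positives if positives else 0.0
    return fpr, fnr


y_true = [0, 0, 0, 1, 1]
model_a = [1, 0, 0, 1, 1]   # one false positive
model_b = [0, 0, 0, 1, 0]   # one false negative
print(error_rates(y_true, model_a))  # (0.3333333333333333, 0.0)
print(error_rates(y_true, model_b))  # (0.0, 0.5)
```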

2504.13375

Apr 2025 · Theoretical Economics

Robust Aggregation of Preferences

This paper analyzes a society composed of individuals who have diverse sets of beliefs (or models) and diverse tastes (or utility functions). It characterizes the model selection process of a social planner who wishes to aggregate individuals' beliefs and tastes but is concerned that their beliefs are misspecified (or incorrect). A novel impossibility result emerges under several desiderata: a utilitarian social planner who prioritizes robustness to misspecification never aggregates individuals' beliefs but instead behaves as a dictator by adopting one individual's belief as the social belief. This tension between robustness and aggregation exists because aggregation yields policy-contingent beliefs, which are very sensitive to policy outcomes. The impossibility can be resolved, but it would require assuming individuals have heterogeneous tastes and some common beliefs. Applications in treatment choice and dynamic macroeconomics are explored.

2504.07401

Apr 2025 · Theoretical Economics

Optimal classification with endogenous behavior

I consider the problem of classifying individual behavior in a simple setting of outcome performativity where the behavior the algorithm seeks to classify is itself dependent on the algorithm. I show in this context that the most accurate classifier is either a threshold or a negative threshold rule. A threshold rule offers the "good" classification to those individuals more likely to have engaged in a desirable behavior, while a negative threshold rule offers the "good" outcome to those less likely to have engaged in the desirable behavior. While seemingly pathological, I show that a negative threshold rule can maximize classification accuracy when behavior is endogenous. I provide an example of such a classifier and extend the analysis to more general algorithm objectives. A key takeaway is that when behavior is endogenous to classification, optimal classification can negatively correlate with signal information. This may yield negative downstream effects on groups in terms of the aggregate behavior induced by an algorithm.

2504.06127

Apr 2025 · Theoretical Economics

Efficient Mechanisms under Unawareness

We study the design of efficient mechanisms under asymmetric awareness and information. Unawareness refers to the lack of conception rather than the lack of information. Assuming quasi-linear utilities and private values, we show that a social choice function that is utilitarian ex post efficient when pooling the awareness of all agents can be implemented in conditional dominant strategies, without requiring the social planner to be fully aware ex ante. To this end, we develop novel dynamic versions of Vickrey-Clarke-Groves mechanisms in which types are revealed and subsequently elaborated at endogenous higher awareness levels. We explore how asymmetric awareness affects budget balance and participation constraints. We show that ex ante unforeseen contingencies are no excuse for deficits. Finally, we propose a modified reverse second-price auction for the efficient procurement of complex, incompletely specified projects.
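For orientation, here is a sketch of the standard, unmodified reverse second-price (Vickrey) procurement auction that the proposed mechanism builds on. The lowest bidder wins and is paid the second-lowest bid. Because the payment does not depend on the winner's own bid, bidding one's true cost is weakly dominant. The sketch does not model unawareness or the paper's modification for incompletely specified projects. The function name and the tie-breaking by supplier name are illustrative choices.

```python
def reverse_second_price_auction(bids):
    """Standard reverse Vickrey auction.

    bids: supplier name -> bid (reported cost). The lowest bidder wins and is
    paid the second-lowest bid; ties are broken by supplier name.
    """
    if len(bids) < 2:
        raise ValueError("a second-price auction needs at least two bidders")
    ranked = sorted(bids.items(), key=lambda item: (item[1], item[0]))
    winner = ranked[0][0]
    payment = ranked[1][1]
    return winner, payment


print(reverse_second_price_auction({"A": 10.0, "B": 7.0, "C": 9.0}))  # ('B', 9.0)
```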

2504.04382

Apr 2025 · Theoretical Economics

Shapley-Scarf Markets with Objective Indifferences

Top trading cycles with fixed tie-breaking (TTC) has been suggested as a way to deal with indifferences in object allocation problems. Unfortunately, under general indifferences, TTC is neither Pareto efficient nor group strategy-proof. Furthermore, it may not select an allocation in the core of the market, even when the core is non-empty. However, when indifferences are agreed upon by all agents ("objective indifferences"), TTC maintains Pareto efficiency, group strategy-proofness, and core selection. Further, we characterize objective indifferences as the most general setting in which TTC maintains these properties.
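One way to express "TTC with fixed tie-breaking" in code is to convert each agent's weak ranking into a strict one using a single fixed order over objects, then run standard top trading cycles on a Shapley-Scarf market. Several choices here are assumptions for illustration: indifference classes are represented as sets, one object order is shared by all agents, and the function name is hypothetical. The paper's exact tie-breaking convention may differ. In the example, agent 1 is indifferent between b and c, and the two tie-breaking orders produce different allocations.

```python
def ttc_fixed_tie_breaking(endowment, prefs, tie_order):
    """Top trading cycles after breaking ties with one fixed object order.

    endowment: agent -> the object that agent owns
    prefs: agent -> list of indifference classes (sets of objects), best first;
           each agent is assumed to rank every object in the market
    tie_order: all objects; an earlier object wins ties within a class
    """
    rank = {obj: i for i, obj in enumerate(tie_order)}
    # Turn each weak ranking into a strict one using the fixed tie-breaking order.
    strict = {
        agent: [obj for cls in classes for obj in sorted(cls, key=rank.__getitem__)]
        for agent, classes in prefs.items()
    }
    owner = {obj: agent for agent, obj in endowment.items()}
    remaining = set(endowment)
    assignment = {}
    while remaining:
        available = {endowment[a] for a in remaining}
        # Every remaining agent points to the owner of its best available object.
        points_to = {
            a: owner[next(obj for obj in strict[a] if obj in available)]
            for a in remaining
        }
        # Follow pointers until an agent repeats; the repeated segment is a cycle.
        path, agent = [], next(iter(remaining))
        while agent not in path:
            path.append(agent)
            agent = points_to[agent]
        for member in path[path.index(agent):]:
            # Each cycle member receives the object owned by the agent it points to.
            assignment[member] = endowment[points_to[member]]
            remaining.discard(member)
    return assignment


endowment = {1: "a", 2: "b", 3: "c"}
prefs = {
    1: [{"b", "c"}, {"a"}],  # agent 1 is indifferent between b and c
    2: [{"a"}, {"b"}, {"c"}],
    3: [{"a"}, {"c"}, {"b"}],
}
print(ttc_fixed_tie_breaking(endowment, prefs, ["c", "b", "a"]))
print(ttc_fixed_tie_breaking(endowment, prefs, ["b", "c", "a"]))
```

Removing one cycle per round, as done here, yields the same allocation as removing all cycles simultaneously. This holds because a cycle, once formed, persists until it is removed.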

2503.18144

Mar 2025 · Theoretical Economics